It’s time for some deep learning. Check out this list to pick up some new terminology—and learn a bit about the history of artificial intelligence in particle physics and astrophysics.

Artificial intelligence

Artificial intelligence refers to machines that have abilities we associate with human and animal intelligence, such as perceiving, learning, reasoning and problem solving.

First proposed in 1956, AI has since become an umbrella term for a wide variety of powerful and pervasive computing technologies—such as machine learning and neural networks—that continue to transform science and society. For example, in back-to-back breakthroughs announced in 2022, AI helped researchers predict the shapes of hundreds of millions of proteins from soil, sea water, and human bodies, cutting untold years off the time needed to identify and understand these molecular machines.

AI also plays an increasingly big role in particle physics and astrophysics, allowing scientists to control particle accelerators with unprecedented precision and to analyze enormous amounts of data from particle detectors and telescopes almost instantaneously.

The definition of “artificial intelligence” has changed over time, says Daniel Ratner, head of the machine-learning initiative at SLAC National Accelerator Laboratory. “I think in the past, people used the term AI to refer to futuristic generalized intelligence, whereas machine learning referred to concrete algorithms.”

Ratner says he prefers a “big tent” definition of AI that encompasses everything from deep neural networks to statistical methods and data science, “because they all relate to each other as parts of a larger AI ecosystem.”

Machine learning

Machine learning is a type of artificial intelligence that detects patterns in large datasets, then uses those patterns to make predictions and improve future rounds of analysis.

All machine learning is based on algorithms, which in this context are statistical rules for analyzing data. A machine-learning system applies its algorithms to a set of training data and learns how to analyze similar data in the future.
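As a minimal illustration of "learning from training data," here is a least-squares line fit, one of the simplest statistical learning algorithms. The choice of algorithm and the training numbers are our own hypothetical example, not drawn from any experiment: the code learns a slope and intercept from five examples, then uses them to predict a value it has not seen.

```python
def fit_line(xs, ys):
    """Learn the slope and intercept that minimize squared error on the training data."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept

def predict(model, x):
    """Apply the learned pattern to new data."""
    slope, intercept = model
    return slope * x + intercept

# Hypothetical training data: a noisy, roughly linear relationship.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [1.1, 2.9, 5.2, 6.8, 9.0]

model = fit_line(train_x, train_y)
print(f"prediction at x = 5: {predict(model, 5.0):.2f}")  # prints 10.91
```

Real machine-learning systems use far more flexible models and much larger datasets, but the two steps are the same: fit to training data, then predict.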

Because it dramatically speeds up discovery and can find solutions that humans consider counterintuitive, machine learning has been a tremendous boon for science. “I use AI in very small ways every day,” says Chihway Chang, an assistant professor in the department of astronomy and astrophysics at the University of Chicago. “It’s not even super fancy most of the time, it’s just part of the toolkit.”

AI streamlines searches for new materials, celestial objects and rare particle events, and it improves the performance of complex tools like particle accelerators, X-ray lasers and telescopes. “Some calculations are so slow that if you don’t use AI to accelerate them, you basically can’t do the science,” Chang says.

Physicists have been using machine learning for decades, says Nhan Tran, a physicist at Fermi National Accelerator Laboratory and the coordinator of the Fermilab AI project and the Fast Machine Learning research collective. “But the extent to which AI can now affect almost every part of the experiment—not just the data analysis part, but operations or simulation, or even the management of the data itself—that's really exciting.”

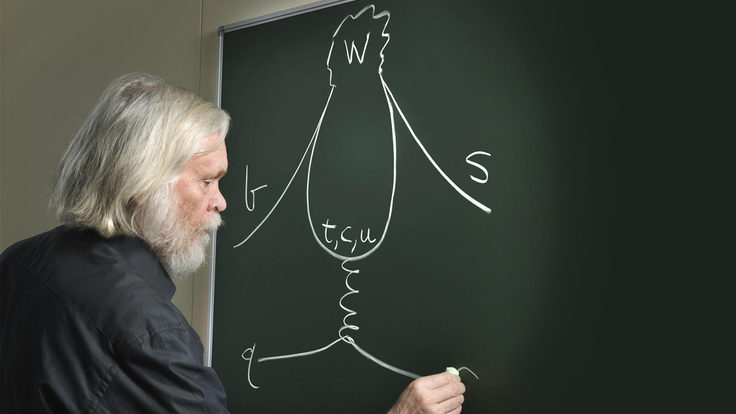

Boosted decision trees

Physicists have been using decision trees since the 1970s. Decision-tree algorithms work by running data through a series of decision points. At each point, the algorithm decides whether to keep or reject a piece of data based on criteria it learned from training data.

Think of the process as a tree being pruned; some branches remain and lead to smaller branches and leaves, while others are removed.
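A small hand-written tree makes the "series of decision points" concrete. This is a sketch only: the event features (`pt`, a transverse momentum, and `n_jets`, a jet count) and every threshold are hypothetical stand-ins for criteria a real tree would learn from training data.

```python
def classify_event(event: dict) -> str:
    """Toy decision tree: each `if` is one decision point (thresholds are made up)."""
    # Decision point 1: prune low-momentum events immediately.
    if event["pt"] < 20.0:
        return "reject"
    # Decision point 2: among survivors, events with two or more jets are kept.
    if event["n_jets"] >= 2:
        return "keep"
    # Decision point 3: single-jet events survive only at very high momentum.
    return "keep" if event["pt"] > 100.0 else "reject"

events = [
    {"pt": 15.0, "n_jets": 3},
    {"pt": 45.0, "n_jets": 2},
    {"pt": 60.0, "n_jets": 1},
    {"pt": 150.0, "n_jets": 1},
]
for e in events:
    print(e, "->", classify_event(e))
```

Because every branch is a readable yes-or-no question, it is easy to trace why any particular event was kept or rejected.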

Boosted decision trees work the same way as decision trees, except that they run data through many trees instead of just one before deciding which data to reject. The trees are built one after another: each new tree concentrates on the examples the previous trees got wrong, and the trees’ combined vote sharpens the distinction between signal and noise until it becomes clear.
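The boosting idea can be sketched with AdaBoost, one classic boosting algorithm, using one-split "stumps" in place of full trees. Everything here is an illustrative assumption (a single feature, made-up labels, three rounds); real analyses typically use deeper trees and established libraries. In this toy data no single stump can isolate signal sitting in the middle of the range, but three boosted stumps can.

```python
import math

def stump_predict(threshold, polarity, x):
    """A one-split tree: +1 (signal) or -1 (background)."""
    return 1 if polarity * (x - threshold) > 0 else -1

def candidate_thresholds(xs):
    """Split points between neighboring values, plus one below and one above all data."""
    v = sorted(set(xs))
    return [v[0] - 0.5] + [(a + b) / 2 for a, b in zip(v, v[1:])] + [v[-1] + 0.5]

def train_stump(xs, ys, weights):
    """Pick the split with the lowest weighted error; return (error, threshold, polarity)."""
    best = None
    for t in candidate_thresholds(xs):
        for polarity in (1, -1):
            err = sum(w for x, y, w in zip(xs, ys, weights)
                      if stump_predict(t, polarity, x) != y)
            if best is None or err < best[0]:
                best = (err, t, polarity)
    return best

def adaboost(xs, ys, rounds):
    n = len(xs)
    weights = [1.0 / n] * n                     # every example starts equally important
    ensemble = []
    for _ in range(rounds):
        err, t, polarity = train_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)   # guard against log(0)
        alpha = 0.5 * math.log((1 - err) / err)  # more accurate trees get a bigger vote
        ensemble.append((alpha, t, polarity))
        # Information passed to the next tree: raise the weight of examples this
        # tree got wrong and lower the weight of the ones it got right.
        weights = [w * math.exp(-alpha * y * stump_predict(t, polarity, x))
                   for x, y, w in zip(xs, ys, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def ensemble_predict(ensemble, x):
    """Weighted vote of all trees."""
    score = sum(alpha * stump_predict(t, p, x) for alpha, t, p in ensemble)
    return 1 if score > 0 else -1

# Hypothetical 1D data: signal (+1) sits in the middle of the range.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [-1, -1, 1, 1, 1, 1, -1, -1]

single_err, _, _ = train_stump(xs, ys, [1.0 / len(xs)] * len(xs))
model = adaboost(xs, ys, rounds=3)
print("best single stump misclassifies", round(single_err * len(xs)), "of", len(xs))
print("boosted predictions:", [ensemble_predict(model, x) for x in xs])
```

The best single stump gets two of the eight examples wrong; after three rounds the boosted ensemble classifies all eight correctly.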

The trees work well with data that’s tabulated in rows and columns. And since each decision is binary—keep or reject?—it’s fairly easy to understand how decision trees work. “Physicists didn’t trust machine learning for a long time,” says Javier Duarte, a physics professor at the University of California, San Diego. “That’s why we liked tree-based methods. It’s making decisions the way we normally do.”

The MiniBooNE experiment at Fermilab was an early adopter of boosted decision trees in the early 2000s, using them to study neutrino oscillations.

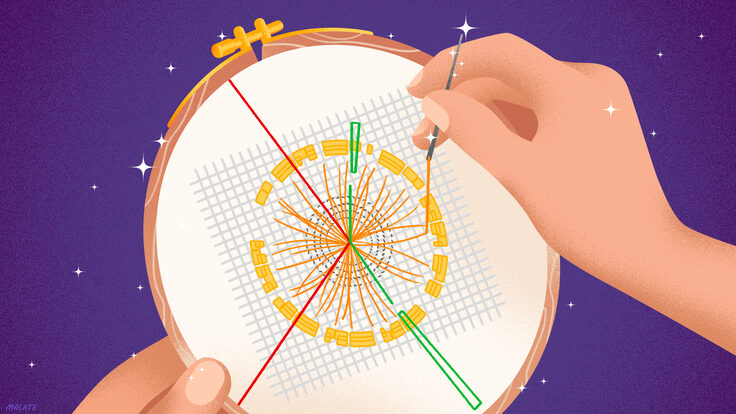

But boosted decision trees are probably best known for their contribution to the 2012 discovery of the Higgs boson.

Teams from the CMS and ATLAS experiments at CERN’s Large Hadron Collider used boosted decision trees to classify collision events and separate signals from background noise. “It showed we could use these techniques to search for new particles in a well calibrated way that we can understand,” says Lindsey Gray, a scientist at Fermilab. “It was a huge milestone and led to an explosion of using these algorithms in the subsequent decade.”

Reinforcement learning

Reinforcement learning in computing began in the 1980s. This machine-learning technique optimizes decision-making by rewarding desired behaviors and punishing undesired ones. Its algorithms train by exploring their environment and acting through trial and error, receiving “reward signals” for certain behaviors.
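To show rewards and trial and error in action, here is tabular Q-learning, one classic reinforcement-learning algorithm, chosen by us for illustration. The environment is a hypothetical five-square corridor: reaching the far end earns a reward, and every other step carries a small penalty. All parameter values (`alpha`, `gamma`, `epsilon`, episode count) are assumptions picked for this toy problem.

```python
import random

N_STATES = 5            # positions 0..4 along a hypothetical corridor
GOAL = N_STATES - 1     # reaching position 4 ends the episode
MOVES = (-1, +1)        # action 0 steps left, action 1 steps right

def step(state, action):
    """Apply an action; return (next_state, reward, done)."""
    nxt = min(max(state + MOVES[action], 0), GOAL)
    if nxt == GOAL:
        return nxt, 1.0, True       # reward the desired behavior
    return nxt, -0.01, False        # small penalty for every other step

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    # q[s][a] estimates the long-run reward of taking action a in state s.
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Trial and error: sometimes explore at random, otherwise act greedily.
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            nxt, reward, done = step(state, action)
            # Target: the reward signal plus the discounted value of the best next action.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
    return q

q = train()
policy = ["right" if q[s][1] > q[s][0] else "left" for s in range(GOAL)]
print(policy)
```

After training, the learned policy is to step right from every square, which is the shortest path to the reward.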

This technique works best for sequential decision-making in real-time situations, such as training an algorithm to play games or giving an autonomous vehicle the ability to plan a route and perceive its environment. The computer program AlphaGo’s unprecedented 2015 victory over a professional human player of the board game Go was due in part to reinforcement learning.

Training reinforcement-learning algorithms is time-consuming and difficult because the algorithm has to learn by interacting with its environment, usually a simulation that must capture all the relevant factors. “It hasn’t been used that much in particle physics yet, since it is so challenging,” Duarte says. Even when it’s applied to just the right kind of problem, he adds, “some argue there are ways to turn these problems into different kinds of problems that don’t need reinforcement learning.”

Reinforcement learning has been applied to controlling and enhancing the performance of particle accelerators and to “grooming” particle jets coming out of collisions by pruning away unwanted radiation. Astronomers have used it to find the most efficient way to collect data with their telescopes and to optimize adaptive optics systems that sharpen a telescope’s view. And in quantum information science, reinforcement learning has been applied to controlling quantum processes, measuring quantum devices, and controlling quantum gates in quantum computers.

Neural networks

A neural network is a type of machine learning that’s loosely modeled after the human brain. Once a neural network is trained on a large set of data—whether from particle collisions or astronomical images—it can automatically analyze other complex datasets, almost instantaneously and with great precision.

A neural network consists of interconnected processing nodes, from dozens in a small network to millions in the largest ones, which can have billions of adjustable connections among them. In a typical network, data travels through the nodes in one direction, with each node receiving signals from nodes in the layer above it and passing information along to nodes in the layer below it.

In the brain, a neuron fires only after it has received enough input from the neurons around it. In a similar way, each node in a neural network gathers incoming signals and assigns each one a “weight” based on how important it is. Once the weighted signals are combined, the node processes the total and produces an output. Adjusting these weights is what allows the network to learn from examples.
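A node that weights its inputs and "fires" only above a threshold takes just a few lines to write. This sketch uses a simple step threshold to mirror the brain analogy, and the weights are hand-picked by us rather than learned; modern networks learn their weights and usually use smoother activation functions. Wiring three such nodes into two layers lets the network compute XOR (output 1 when exactly one input is on), something no single node of this kind can do.

```python
def neuron(inputs, weights, threshold):
    """Weight each input, add them up, and fire (output 1) only above the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total > threshold else 0

def layer(inputs, weight_rows, thresholds):
    """Every node in a layer receives all outputs of the layer above it."""
    return [neuron(inputs, w, t) for w, t in zip(weight_rows, thresholds)]

# Hypothetical hand-picked weights for a 2-input network.
hidden_w = [[1.0, 1.0], [1.0, 1.0]]
hidden_t = [0.5, 1.5]      # first hidden node acts like OR, second like AND
output_w = [[1.0, -1.0]]
output_t = [0.5]           # fire if OR is on but AND is off

for a in (0, 1):
    for b in (0, 1):
        hidden = layer([a, b], hidden_w, hidden_t)   # data flows in one direction
        print(a, b, "->", layer(hidden, output_w, output_t)[0])
```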

When a neural network is trained, the weights on the connections between nodes, and the thresholds that each node’s combined input must exceed before it fires, start out at random values. Training then adjusts those weights and thresholds over and over until the network’s outputs consistently match the correct answers for the training data.
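The oldest version of this training loop is the perceptron learning rule for a single node, shown here as a sketch. Starting from random values, it nudges the weights and threshold after every wrong answer until every training example comes out right. The learning rate, seed and training task (logical AND) are our own illustrative choices; modern networks adjust millions of weights using backpropagation and gradient descent instead.

```python
import random

def predict(weights, threshold, inputs):
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > threshold else 0

def train_perceptron(samples, lr=0.1, max_epochs=1000, seed=1):
    rng = random.Random(seed)
    # Start from random weights and a random threshold.
    weights = [rng.uniform(-1, 1) for _ in samples[0][0]]
    threshold = rng.uniform(-1, 1)
    for _ in range(max_epochs):
        mistakes = 0
        for inputs, target in samples:
            error = target - predict(weights, threshold, inputs)   # -1, 0 or +1
            if error:
                mistakes += 1
                # Nudge the weights toward the right answer and the threshold the opposite way.
                weights = [w + lr * error * x for w, x in zip(weights, inputs)]
                threshold -= lr * error
        if mistakes == 0:   # every training example is now answered correctly
            break
    return weights, threshold

# Training data for logical AND: output 1 only when both inputs are 1.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, threshold = train_perceptron(AND_SAMPLES)
print([predict(weights, threshold, x) for x, _ in AND_SAMPLES])
```

Because AND can be separated by a single straight line, the perceptron rule is guaranteed to stop making mistakes after a finite number of updates.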

Trainable neural networks were first proposed in the 1950s and have gone through several boom-and-bust cycles of interest in the scientific community. Throughout the 1990s and into the early 2000s, researchers in high-energy physics used early neural networks for low-level pattern recognition in detectors and for determining the properties of events.

Everything changed around 2012, says Benjamin Nachman, head of the Machine Learning for Fundamental Physics Group at Lawrence Berkeley National Laboratory. “There was this transition,” he says. “Tools became available that weren’t available before. We had been using neural networks since the ’90s, but the neural networks of the ’90s are not the neural networks of today. We can do things now that were unimaginable before.”

Part of the change came from the introduction of the rectified linear activation function, also known as ReLU, a simple way for a node to process its combined input that made deep networks much easier to train. Part came from the adoption of graphics processing units, or GPUs, computer chips originally developed to speed up the rendering of graphics in video games by performing many calculations in parallel. Using GPUs to train neural networks gave scientists far more computing power, letting them build networks with many layers, an approach they called “deep learning.” Around the same time, physicists started to generate the massive datasets needed to train such networks.
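One commonly cited reason ReLU helped can be shown with a little arithmetic. During training, the error signal sent back through a deep network is multiplied, layer by layer, by the slope of each node's activation function (along with other factors, such as the weights, which this sketch ignores). The S-shaped sigmoid function used in many earlier networks has a slope of at most 0.25, so the signal fades as layers are added; ReLU's slope is exactly 1 for any positive input. The ten-layer depth below is an arbitrary choice.

```python
import math

def relu(x):
    """Rectified linear unit: pass positive inputs through unchanged, output 0 otherwise."""
    return max(0.0, x)

def relu_slope(x):
    return 1.0 if x > 0 else 0.0

def sigmoid(x):
    """An S-shaped activation function common in earlier networks."""
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_slope(x):
    s = sigmoid(x)
    return s * (1.0 - s)     # never larger than 0.25, which it reaches at x = 0

# Product of activation slopes across a hypothetical 10-layer network.
layers = 10
print("sigmoid, best case:", sigmoid_slope(0.0) ** layers)   # 0.25**10, about 1e-6
print("ReLU, active nodes:", relu_slope(1.0) ** layers)      # 1.0**10 = 1.0
```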

Today, scientists use neural networks to analyze tracks left by neutrinos, light distorted by hidden phenomena in space, and energy transformed into matter at the Large Hadron Collider.

Convolutional neural networks

Inspired by the animal visual cortex, convolutional neural networks stack several layers of small filters that scan across an image to find features. The first layers find simple features like color and edges. Subsequent layers recognize more complex elements within the image, such as patterns compatible with faces. For the last decade, computer vision (the broad term for technologies that enable computers to identify objects and people in images and video) has been propelled by CNNs.
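The core operation of a CNN layer is sliding a small grid of numbers, called a kernel, across an image and summing the products at each position. In a real CNN the kernel values are learned and each layer uses many kernels; in this sketch we hand-pick a classic vertical-edge kernel and apply it to a made-up 4-by-5 image whose left side is dark (0) and right side bright (1).

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image with no padding and sum the products.
    Like deep-learning libraries, this does not flip the kernel (strictly,
    cross-correlation), which is what CNNs call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        out.append(row)
    return out

# Hypothetical image: dark left half, bright right half.
image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]
# Responds strongly where brightness increases from left to right.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
for row in convolve2d(image, vertical_edge):
    print(row)
```

The output is large (3.0) where the kernel straddles the dark-to-bright boundary and zero over the uniform bright region, so the layer has "found" the edge.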

One big advantage of CNNs is that they can learn the features of raw, unprocessed images directly from data without human input. They work well with data that can be presented in two dimensions, such as photographs and particle event displays, and they can also classify audio data. “Computer vision really jump-started how we think about deep learning in physics,” says Michael Kagan, a lead staff scientist at SLAC.

Today, CNNs are widely used in astrophysics, where images of the night sky produce enormous amounts of data. CNNs have helped scientists find gravitational lenses and derive the ages, masses and sizes of star clusters.

In high-energy physics, scientists used CNNs as early as 2015 to tag and classify jets of particles produced by high-energy collisions at the LHC. Interest eventually shifted to other methods, including graph neural networks, that are better suited to the kinds of data high-energy physics experiments produce.

Graph neural networks

Graph neural networks are sets of geometric deep-learning algorithms that learn from graphs. A graph is a set of nodes (pieces of data) and the relationships between them, known as edges.
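Many GNNs are built from repeated rounds of "message passing," in which each node updates its information using its neighbors' information along the edges. This is a minimal sketch under simplifying assumptions: each node holds one number (a hypothetical energy reading for a detector hit), the edges are made up, and the node mixes its own value and its neighbors' average 50/50, whereas a real GNN learns that mixing and uses vectors of features.

```python
def message_pass(features, edges):
    """One round of message passing on an undirected graph: each node's new value
    is half its own value plus half the average of its neighbors' values."""
    neighbors = {node: [] for node in features}
    for a, b in edges:
        neighbors[a].append(b)
        neighbors[b].append(a)
    updated = {}
    for node, value in features.items():
        messages = [features[m] for m in neighbors[node]]
        mean_msg = sum(messages) / len(messages) if messages else 0.0
        updated[node] = 0.5 * value + 0.5 * mean_msg
    return updated

# Hypothetical detector hits (nodes) and links between nearby hits (edges).
features = {"hit_a": 4.0, "hit_b": 2.0, "hit_c": 0.0, "hit_d": 8.0}
edges = [("hit_a", "hit_b"), ("hit_b", "hit_c"), ("hit_a", "hit_d")]
print(message_pass(features, edges))
```

Nothing here assumes a regular grid: each node can have any number of neighbors, which is why this kind of operation suits irregular detector geometries.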

Though GNNs were introduced in the early 2000s, physicists didn’t start using them to analyze experimental data until 2019. GNNs have helped reconstruct particle tracks from detector hits, classify particle jets and understand detector events involving multiple particles.

Unlike convolutional neural networks, which work only with data that’s presented in a regular grid, GNNs can analyze datasets that have irregular, 3D geometric shapes. This makes GNNs a great tool for particle physics, where datasets from particle accelerators often come from multiple detectors that track particles in different ways. GNNs have been widely used to identify jets, classify particle interactions, determine whether a particle had cosmic origins, and search for Higgs bosons decaying to bottom quarks.

They’re going to become increasingly important as the LHC operates at higher luminosity and the particle interactions that scientists reconstruct become more and more complex, Gray says. “Once we understand how to use this tool very deeply, we will be able to extract even more interesting science from our detectors, since we will be able to do better reconstruction in busier environments,” he says. “It has completely revolutionized the way we approach our data and how we conduct our data analysis.”

Foundation models

As the machine-learning community continues to innovate, physicists have also begun to work with architectures even more advanced than CNNs and GNNs, “most notably the transformer, which is at the center of state-of-the-art AI applications in industry,” Nachman says. “This is the backbone of most foundation models.”

Based on complex neural networks, foundation models can identify and generate images, answer natural-language questions, and predict the next item in a sequence. The main difference between foundation models and other deep-learning neural networks is scale: Foundation models are trained on datasets more massive than any available in the past. They are also often trained on data no one has labeled, learning its structure without being told in advance what the data contain.

Foundation models became popular in 2022, when OpenAI released ChatGPT, a chatbot built on a large language model, which writes natural-language answers in response to prompts. Other foundation models, such as DALL-E, generate images. Physicists, followed shortly thereafter by astrophysicists, have been working on generative models since 2017.

Foundation models can improve AI performance on many tasks involving natural language processing and computer vision. They can be used to generate new text, summarize a research paper, write code and identify an image.

However, building and training foundation models requires enormous amounts of computing time and huge datasets. And today’s foundation models are notoriously unreliable; for instance, large language models can confidently give answers that are wrong or entirely made up, a problem people in the field call “hallucination.”

Since this paradigm is so new, physicists are still playing around with it, trying to figure out how it might help them do things like generate code for experiments or find galaxies with certain features. They’re also trying to develop foundation models that are trained on the shared features of different physical systems, such as conservation laws.

The future could lie in a hybrid model that combines the power of foundation models with the structured knowledge of physics.

“A lot of people are thinking about how to integrate our knowledge with these big models,” says Kagan, who is part of a growing group of physicists developing new AI methods tailored for physics. “Hybrid systems could have the potential to help us design future detectors, analyze data, or even, far in the future, help us come up with hypotheses. It’s an exciting time to develop AI for specific kinds of high energy physics challenges.”