Almost a decade remains before the Large Synoptic Survey Telescope sees first light, and construction has yet to begin on most of the telescope’s hardware. Even so, astrophysicists are already developing methods for analyzing the rush of data LSST will produce. In a survey this large and this advanced, data management is just as important as cameras and mirrors.

From atop Cerro Pachón ridge in Chile, LSST will conduct the world’s most ambitious astronomical imaging survey yet. Astronomy surveys have been generating catalogs of data since the 1940s. They help scientists study the universe on a large scale and collect vast amounts of data far more quickly than individual observations would allow. LSST will compile a catalog that dwarfs all current survey catalogs combined.

A tribute to advanced technology, the telescope’s powerful camera will capture light from objects 100 times fainter than those observed in any current survey, giving scientists a peek into the earliest developmental stages of the universe. The unique, triple-mirror system will scan the entire southern sky in three nights, faster than any other telescope. And each night, the telescope will collect about 15 terabytes of data. The Sloan Digital Sky Survey, the largest optical survey to date, took 10 years to gather the same amount.

All of this data will allow scientists to create a detailed three-dimensional map of our universe and address four main science goals: to explore dark energy, to investigate galactic structure, to monitor objects’ movements on short timescales and to track near-Earth asteroids.

To handle the gargantuan quantity of data that will arrive from the moment the telescope first turns on around 2020, work on a complex system of computer simulations is already well underway. The system has three principal components: the Image Simulator, which will produce mock LSST images to anticipate image quality; the Operations Simulator, which will predict the optimal viewing schedule each night; and the Calibrations Simulator, which will calculate the true brightness of objects to ensure accurate science. Combined, the simulators will help scientists get as much science as possible from LSST data.

For astrophysicists, the LSST simulators are more comprehensive than any before. Such sophisticated simulations were, until recently, primarily used in particle and high-energy physics research.

“In a particle accelerator, you have a gazillion particles coming out with jets, tracks and lots more,” says Fermilab scientist and LSST collaborator Scott Dodelson. “Similarly with LSST, things are becoming very crowded as we go deeper, so we need sophisticated algorithms to even be able to identify what a galaxy is, and we need to test these algorithms with simulations.”

Each simulator serves a different purpose, but all are inherently connected and necessary for LSST’s future success.

The Image Simulator

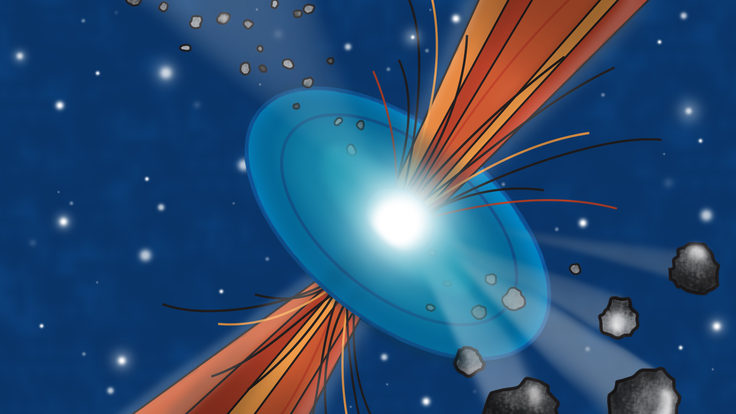

If all goes well, LSST will collect about 1700 images per night. John Peterson, an associate professor of physics at Purdue University, is in charge of finding out how many photons will make up every pixel of each three-gigapixel image. A number of factors including cloud cover, atmospheric turbulence and the telescope’s optical system can affect photon density and thus influence image quality. Image quality is important when determining, for example, how accurately astrophysicists can measure sizes and shapes of distant galaxies to better understand dark energy.

Peterson and his colleagues begin by pulling data from previous surveys and observations. The data includes information about the position, brightness and other parameters of hundreds of thousands of stars, galaxies and additional sources. They then simulate the light from these objects as it would travel through LSST’s mirrors and lenses.

When they’re through, they have an image that resembles what LSST will take over each 15-second exposure at a specific point in space and time. On average, each giant image contains about 1 trillion photons. Couple that with the 1700 images LSST will capture per night, and that gives more than 1 quadrillion photons each night.

“We often run simulations on thousands of computers at a time because just to simulate one of those giant images would take about a thousand hours for one computer,” Peterson says.

In this way, Peterson and his colleagues have produced nearly 12,000 synthetic, digital images that are the equivalent of six nights of LSST data.
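The scale of these numbers can be checked with a back-of-the-envelope calculation. The sketch below uses only the figures quoted in the text; the cluster size of 1,000 machines and the assumption of perfect parallel scaling are illustrative choices, not details of the actual ImSim setup.

```python
# Back-of-the-envelope check of the simulation scale quoted in the text.
PHOTONS_PER_IMAGE = 10**12      # "about 1 trillion photons" per image
IMAGES_PER_NIGHT = 1700         # images LSST is expected to collect per night
CPU_HOURS_PER_IMAGE = 1000      # "about a thousand hours for one computer"
ASSUMED_COMPUTERS = 1000        # assumption: "thousands of computers" taken as 1,000


def photons_per_night(photons_per_image=PHOTONS_PER_IMAGE,
                      images_per_night=IMAGES_PER_NIGHT):
    """Total photons to simulate for one night of observing."""
    return photons_per_image * images_per_night


def wall_clock_hours_for_one_night(images_per_night=IMAGES_PER_NIGHT,
                                   cpu_hours_per_image=CPU_HOURS_PER_IMAGE,
                                   computers=ASSUMED_COMPUTERS):
    """Elapsed hours to simulate one night, assuming the work splits evenly."""
    total_cpu_hours = images_per_night * cpu_hours_per_image
    return total_cpu_hours / computers


if __name__ == "__main__":
    print(f"Photons per night: {photons_per_night():.2e}")
    print(f"Wall-clock hours per simulated night: "
          f"{wall_clock_hours_for_one_night():.0f}")
```

Under these assumptions, one night of LSST data amounts to about 1.7 quadrillion photons, and simulating it would occupy a 1,000-machine cluster for about 1,700 hours, or roughly 71 days.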

“LSST will image the same part of the sky hundreds of times,” Peterson says. “So we just want to simulate at least a few patches of sky hundreds of times to make sure we can analyze that with state-of-the-art algorithms.”

After the images are produced, they go to a data management group that compares each synthetic object with its real counterpart in the catalogs. The group uses this information to design and test image processing algorithms, which will ensure that catalogs of LSST data are organized and managed correctly for scientific research.

Creating a synthetic universe of this sort has other benefits as well. Deborah Bard, a research associate at SLAC National Accelerator Laboratory, uses the mock images to determine how accurately she will be able to measure the shapes of galaxies. In turn, this will help her learn about dark energy and its impact on galactic evolution. With her models already in place, she expects to have her first results shortly after LSST comes online.

The Operations Simulator

Because LSST will not accept proposals for individual observing time, strategy for its 10-year observing schedule can be planned in advance. Abhijit Saha, an LSST operations simulation scientist at the National Optical Astronomy Observatory, and his Operations Simulator team are accepting input from the larger astrophysics community on what’s most important to observe, when to observe it and for how long. This will help the team predict how best to reach LSST’s four science goals.

“We know the four general science goals and we know the kinds of strategies scientists want,” Saha says. “But we’re also relying on scientists’ input to improve the algorithms” that simulate the telescope’s behavior.

Saha and his team are now using the Operations Simulator, known as OpSim, to simulate how a 10-year run may go. The simulations produce a database of lists that contain hundreds of millions of locations the telescope will point to for each 15-second exposure. This type of data will help Saha and his team improve the efficiency of each night’s observations.

“Just a little gain can mean a lot over 10 years,” Saha says. “All of this prep work will pay us back when we start making observations.”

But not all of LSST’s observations can be planned in advance. The region of sky under study may become blocked by clouds. Or an asteroid—one of LSST’s observing targets—may zip into the picture, requiring immediate attention. In addition to setting the base observing plan, OpSim also simulates such scenarios and determines on the fly how best to schedule new observations without jeopardizing others.

“It’s like a chess game,” says Saha. “Each move commits you to something else later on.”
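The kind of trade-off described above can be illustrated with a toy scheduler. This is a minimal sketch of a greedy strategy, not the algorithm OpSim actually uses; the `Field` class, the priority scores and the field names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Field:
    """A patch of sky the telescope could point at (hypothetical model)."""
    name: str
    priority: float          # higher means more urgent; the score is made up
    visits_needed: int = 1   # exposures still required for this field
    clouded: bool = False    # True if clouds currently block the field


def plan_night(fields, n_slots):
    """Greedily fill exposure slots with the most urgent observable field."""
    remaining = {f.name: f.visits_needed for f in fields}
    schedule = []
    for _ in range(n_slots):
        # Only fields that are visible and still need visits are candidates.
        candidates = [f for f in fields
                      if not f.clouded and remaining[f.name] > 0]
        if not candidates:
            break  # nothing observable is left tonight
        best = max(candidates, key=lambda f: f.priority)
        schedule.append(best.name)
        remaining[best.name] -= 1
    return schedule


fields = [
    Field("survey_A", priority=1.0, visits_needed=2),
    Field("survey_B", priority=2.0, clouded=True),
    Field("asteroid_followup", priority=5.0),
]
night = plan_night(fields, n_slots=3)
print(night)
```

In this example the urgent asteroid follow-up takes the first slot, the clouded field is skipped, and the remaining slots go to the lower-priority survey field. A greedy choice like this ignores the "chess game" aspect Saha describes, in which each move constrains later ones; a realistic scheduler would have to look ahead.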

The Calibrations Simulator

Looking at a star or galaxy can be like looking at headlights through a dense fog. You can’t determine the true brightness and hence the distance between you and the lights without clearing the fog. Moreover, the fog can thin or thicken with time, changing the light’s apparent brightness.

Similarly, clouds and a variety of aerosols in Earth’s atmosphere make it impossible for astrophysicists to see the true brightness of a night-sky object. Fortunately, a technique called photometric calibration offers a way around the fog.

Photometric calibration is a way for scientists to use algorithms to subtract away all of the gas and dust shrouding an image, thereby calculating an object’s true brightness. Instead of expecting each astrophysicist to apply photometric calibrations on his or her own, as is common practice, LSST’s data systems will automatically apply photometric calibrations to every object in each LSST image. That will be a huge convenience, says LSST calibration scientist Tim Axelrod. Axelrod is helping design CalSim, which simulates the wide variety of effects that change the apparent brightness of astronomical objects, and the algorithms used to remove those effects.

“Our goal is to be completely invisible,” Axelrod says. “We hope that people doing their science with LSST will never even need to think about the calibration process.”

Like John Peterson and the Image Simulator (ImSim) team, Axelrod and his CalSim team use data catalogs to find stars that have been studied and observed many times, so their true brightness is well understood. The team then calculates the photometric corrections for other objects based on how these well-understood stars look at different times and nights.
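The idea of calibrating against well-understood stars can be sketched in a few lines. The example below assumes the simplest possible model, a single brightness offset shared by every object in an image, and is not a description of how CalSim works; the function names and magnitude values are invented for illustration. Brightness is expressed in astronomical magnitudes, where larger numbers mean fainter objects.

```python
from statistics import median


def zero_point_offset(known_mags, observed_mags):
    """Estimate one image-wide offset from reference stars.

    known_mags:    catalog ("true") magnitudes of the reference stars
    observed_mags: magnitudes of the same stars measured in this image
    The median keeps a single bad measurement from skewing the result.
    """
    differences = [k - o for k, o in zip(known_mags, observed_mags)]
    return median(differences)


def calibrate(observed_mags, offset):
    """Apply the offset to any objects measured in the same image."""
    return [m + offset for m in observed_mags]


# Hypothetical reference stars: haze makes each look 0.3 magnitudes fainter.
known = [15.0, 16.2, 17.5]
observed = [15.3, 16.5, 17.8]
offset = zero_point_offset(known, observed)

# Correct two other (hypothetical) objects seen in the same image.
corrected = calibrate([18.3, 20.3], offset)
print(offset, corrected)
```

Because the haze in this example dims every reference star by the same amount, the estimated offset is about -0.3 magnitudes, and applying it makes the other objects correspondingly brighter. Real corrections vary across the image and over time, which is why a larger set of reference stars gives a better result.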

Many parameters affecting image brightness will change as LSST observes. These include weather, the moon’s location, and the temperature of the telescope’s light detectors, which affects how accurately the detectors read information and hence how bright an object will appear in an image. Axelrod’s goal is to create simulations that will take input from these various parameters, remove their effects from the image, and thus recover the true brightness of objects within an image.

So far, Axelrod has designed simulations using 5 million stars for photometric calibration. However, even more stars would mean an even better correction. Before LSST sees first light, Axelrod would like to build up a simulation that uses 100 million stars.

Looking ahead

When all three of the LSST simulators come together, the result is a complete picture showing scientists what LSST is capable of and how they can gain as much scientific insight from LSST data as possible.

The telescope’s commissioning process will last for two years following first light. Chuck Claver, a systems scientist at NOAO who oversees simulation efforts, is using certain aspects of the three overarching LSST simulators to anticipate and potentially prevent pitfalls during the process. This is one of the first times astrophysicists have used simulations this far in advance to ensure a smooth commissioning process, he says.

“Commissioning the entire LSST system in two years is awfully ambitious, and I think simulations will continue to play a pivotal role in doing that successfully,” Claver says.

The simulations, particularly ImSim and OpSim, are also helping engineers design the camera and telescope optics and foresee potential construction obstacles. So in addition to helping scientists forge ahead in their research, the LSST simulators help ensure the telescope’s success from the start of construction to the final observation.

LSST will capture the universe in unprecedented detail. Scientists expect to fill the void of uncertainty surrounding dark matter and dark energy with never-before-seen galaxies and deep LSST images. In addition to ushering in a new age of large telescopes and sky surveys, LSST will bring the science community closer to solving the current mysteries of our universe and, perhaps, conjure up new ones. The concerted efforts of scientists, working on both simulations and other projects, will be key to bringing this new age into focus.