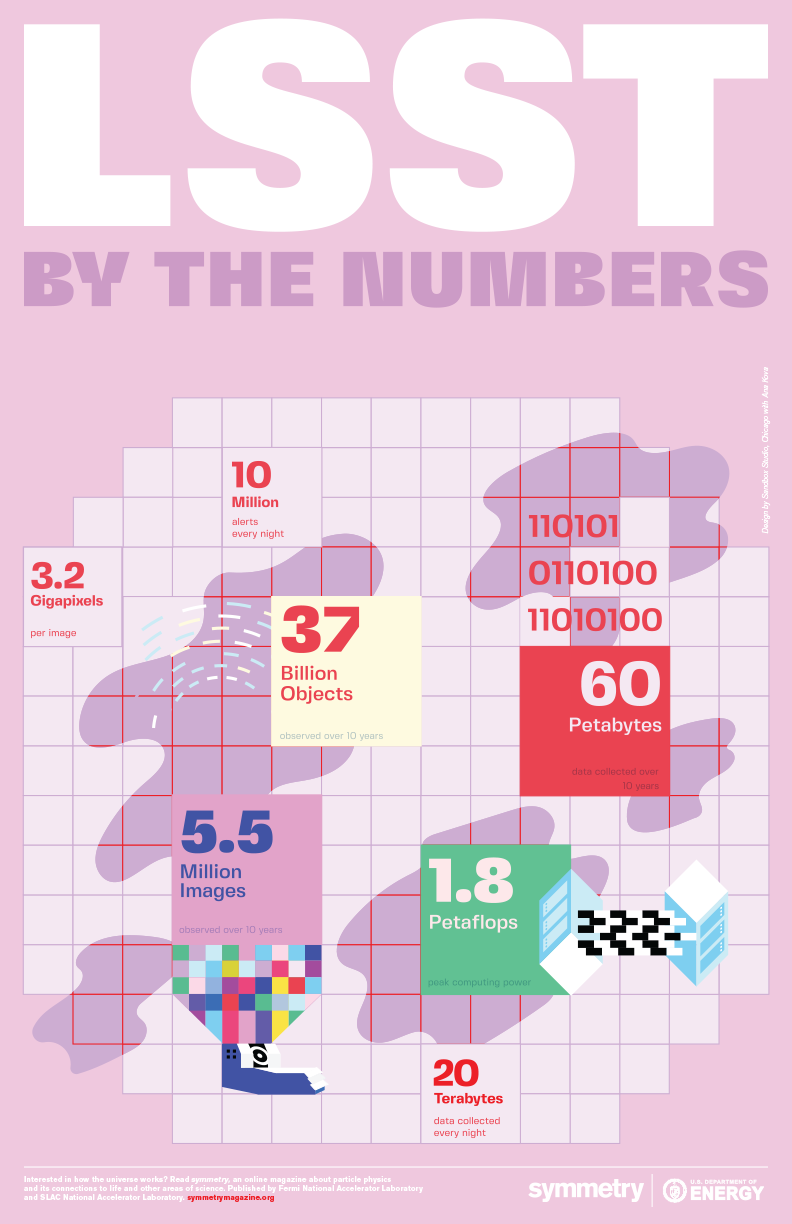

The Large Synoptic Survey Telescope—scheduled to come online in the early 2020s—will use a 3.2-gigapixel camera to photograph a giant swath of the heavens. It’ll keep it up for 10 years, every night with a clear sky, creating the world’s largest astronomical stop-motion movie.

The results will give scientists both an unprecedented big-picture look at the motions of billions of celestial objects over time, and an ongoing stream of millions of real-time updates each night about changes in the sky.

Accomplishing both of these tasks will require dealing with a lot of data: more than 20 terabytes each day for a decade. Collecting and storing this enormous volume of raw data, turning it into processed data that scientists can use, distributing it among institutions all over the globe, and doing all of this reliably and fast require elaborate data management and technology.
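For a rough sense of that scale, the back-of-the-envelope sketch below totals the raw data over the whole survey. It assumes exactly 20 terabytes per day, 365 days per year, and decimal units (1 petabyte = 1000 terabytes). Since the telescope observes only on clear nights and the daily figure is quoted as a minimum, treat the result as an order-of-magnitude estimate, not an official number.

```python
TB_PER_DAY = 20        # from the text: "more than 20 terabytes each day"
DAYS_PER_YEAR = 365    # assumption: ignores leap days and cloudy nights
SURVEY_YEARS = 10      # from the text: a 10-year survey


def total_raw_data_pb(tb_per_day=TB_PER_DAY, years=SURVEY_YEARS):
    """Return the total raw data volume in petabytes (1 PB = 1000 TB)."""
    return tb_per_day * DAYS_PER_YEAR * years / 1000


print(f"Roughly {total_raw_data_pb():.0f} petabytes over the survey")
```

Under these assumptions the raw data alone add up to tens of petabytes, before counting any processed data products.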

International data highways

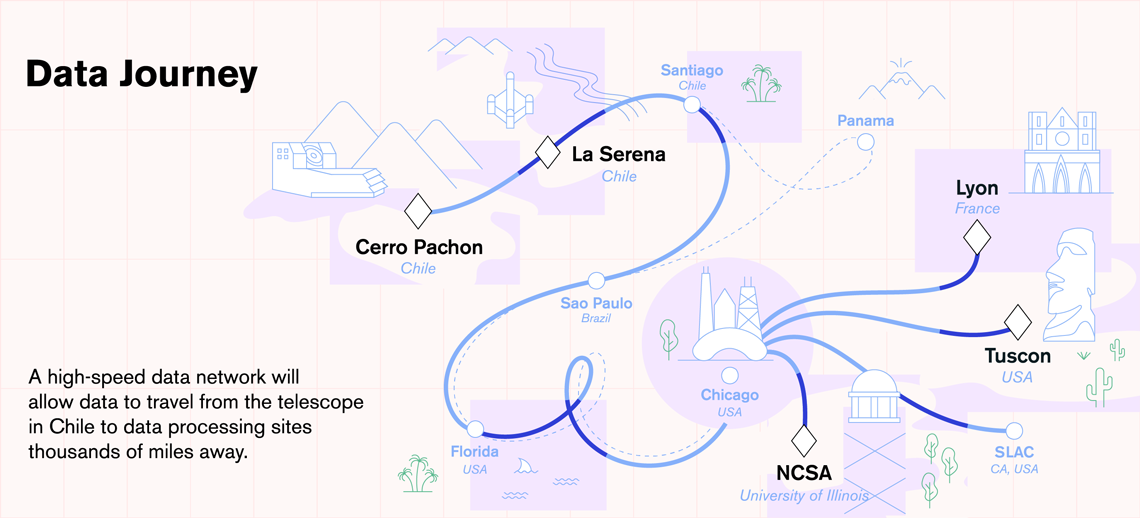

This type of data stream can be handled only with high-performance computing, the kind available at the National Center for Supercomputing Applications at the University of Illinois, Urbana-Champaign. Unfortunately, the U of I is a long way from Cerro Pachón, the remote Chilean mountaintop where the telescope will actually sit.

But a network of dedicated data highways will make it feel like the two are right next door.

Every 40 seconds, LSST’s camera will snap a new image of the sky. The camera’s data acquisition system will read out the data, and, after some initial corrections, send them hurtling down the mountain through newly installed high-speed optical fibers. These fibers have a bandwidth of up to 400 gigabits per second, thousands of times larger than the bandwidth of your typical home internet.
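To put that bandwidth in perspective, here is a minimal sketch of how long one image might take to cross such a link. The 2 bytes per pixel is an assumption (16-bit pixel values, no compression), and the calculation ignores protocol overhead and assumes the full 400 gigabits per second is available, so the numbers are illustrative only.

```python
PIXELS = 3.2e9         # 3.2-gigapixel camera, from the text
BYTES_PER_PIXEL = 2    # assumption: 16-bit pixel values, uncompressed
LINK_GBPS = 400        # peak fiber bandwidth in gigabits per second, from the text
CADENCE_S = 40         # one image every 40 seconds, from the text


def image_size_gb(pixels=PIXELS, bytes_per_pixel=BYTES_PER_PIXEL):
    """Size of one raw image in gigabytes (decimal units)."""
    return pixels * bytes_per_pixel / 1e9


def transfer_time_s(size_gb, link_gbps=LINK_GBPS):
    """Seconds needed to push size_gb gigabytes through the link."""
    return size_gb * 8 / link_gbps   # 8 bits per byte


size = image_size_gb()
print(f"Image size:          {size:.1f} GB")
print(f"Time on the link:    {transfer_time_s(size):.3f} s")
print(f"Average rate needed: {size * 8 / CADENCE_S:.2f} Gb/s")
```

Under these assumptions a single image occupies the link for a small fraction of a second, and the average rate needed to keep up with the camera is far below the peak bandwidth.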

Within a second, the data will arrive at the LSST base site in La Serena, Chile, which will store a copy before sending them to Chile’s capital, Santiago.

From there, the data will take one of two routes across the ocean.

The main route will lead them to São Paulo, Brazil, then fire them through cables across the ocean floor to Florida. From there they will be passed to Chicago and finally rerouted to the NCSA facility at the University of Illinois.

If the primary path is interrupted, the data will take an alternative route through the Republic of Panama instead of Brazil. Either way, the entire trip—covering a distance of about 5000 miles—will take no more than 5 seconds.
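How does that 5-second budget compare with the physical limit? The sketch below estimates the one-way travel time of light in optical fiber over the quoted 5000 miles. The refractive index of 1.47 is an assumed typical value for glass fiber, not a figure from the LSST network design, and the real cable route is not a straight line.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in vacuum
FIBER_REFRACTIVE_INDEX = 1.47       # assumption: typical value for glass fiber
KM_PER_MILE = 1.609344


def one_way_delay_s(distance_miles, refractive_index=FIBER_REFRACTIVE_INDEX):
    """Propagation delay of light through fiber of the given length."""
    distance_km = distance_miles * KM_PER_MILE
    speed_in_fiber = SPEED_OF_LIGHT_KM_S / refractive_index
    return distance_km / speed_in_fiber


delay = one_way_delay_s(5000)   # "about 5000 miles", from the text
print(f"Propagation delay: {delay * 1000:.0f} milliseconds")
```

Pure propagation comes to a few hundredths of a second under these assumptions, so the 5-second figure leaves ample headroom for everything else that happens along the way.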

Curating LSST data for the world

NCSA will be the central node of LSST’s data network. It will archive a second copy of the raw data and maintain key connections to two US-based facilities, the LSST headquarters in Tucson, which will manage science operations, and SLAC National Accelerator Laboratory in Menlo Park, California, which will provide support for the camera. But NCSA will also serve as the main data processing center, getting raw data ready for astrophysics research.

NCSA will prepare the data at two speeds: quickly, for use in nightly alerts about changes to the sky, and at a more leisurely pace, for release as part of the annual catalogs of LSST data.

Alert production has to be quick, to give scientists at LSST and other instruments time to respond to transient events, such as a sudden flare from an active galaxy or dying star, or the discovery of a new asteroid streaking across the firmament. LSST will send out about 10 million of these alerts per night, each within a minute after the event.

Hundreds of computer cores at NCSA will be dedicated to this task. With the help of event brokers—software that facilitates the interaction with the alert stream—everyone in the world will be able to subscribe to all or a subset of these alerts.
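The idea of subscribing to "all or a subset" of the alerts can be pictured as a simple filter over a stream. The sketch below is purely illustrative: the `Alert` class, its fields, and the `subscribe` function are hypothetical names invented for this example and do not reflect LSST's actual alert format or any real broker's interface.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class Alert:
    """Hypothetical alert record; real alerts carry far more information."""
    alert_id: int
    kind: str          # hypothetical label, e.g. "asteroid" or "flare"
    magnitude: float   # hypothetical brightness value


def subscribe(stream: Iterable[Alert],
              wanted: Callable[[Alert], bool]) -> Iterator[Alert]:
    """Yield only the alerts for which the subscriber's predicate is true."""
    for alert in stream:
        if wanted(alert):
            yield alert


# A tiny made-up stream standing in for one night's alerts.
night = [
    Alert(1, "asteroid", 21.5),
    Alert(2, "flare", 18.2),
    Alert(3, "asteroid", 19.0),
]

asteroids = list(subscribe(night, lambda a: a.kind == "asteroid"))
everything = list(subscribe(night, lambda a: True))

print([a.alert_id for a in asteroids])
print(len(everything))
```

A subscriber interested only in asteroids passes a narrow predicate, while one who wants the full stream passes a predicate that accepts everything.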

NCSA will share the task of processing data for the annual data releases with IN2P3, the French National Institute of Nuclear and Particle Physics, which will also archive a copy of the raw data. The two data centers will provide petascale computing power, corresponding to several million billion computing operations per second.

The releases will be curated catalogs of billions of objects containing calibrated images and measurements of object properties, such as positions, shapes and the power of their light emissions. To pull these details from the data, LSST’s data experts are creating advanced software for image processing and analysis. They are also developing machine learning algorithms to help classify the different objects LSST finds in the sky.

Annual data releases will be made available to scientists in the US and Chile and institutions supporting LSST operations.

Last but not least, LSST’s data management team is working on an interface that will make it easy for scientists to use the data LSST collects. What’s even better: A simplified version of that interface will make some of that data accessible to the public.