A visualization of the LSST. Image credit: Todd Mason, Mason Productions Inc./LSST Corporation

This story first appeared in iSGTW on October 13, 2010. It discusses the computing needs of the Large Synoptic Survey Telescope, which astrophysicists hope to use in their search for dark matter and dark energy, among other things.

The Large Synoptic Survey Telescope, to be constructed in Chile, will incorporate the world’s largest digital camera, capable of recording highly detailed data more quickly than any other telescope of comparable resolution.

For the scientists working on the project, all of that amounts to an exciting opportunity to learn more about moving objects (including asteroids near the Earth), transients such as the brief conflagrations of supernovae, dark energy, and the structure of the galaxy.

For computing specialists, it means more data. A lot more data.

The LSST will take between 1000 and 2000 panoramic 3.2-gigapixel images per night, covering its hemisphere of the sky twice weekly. Along with daytime calibration images, this will amount to 20 terabytes of data stored every 24 hours.
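To get a feel for those numbers, here is a rough back-of-envelope sketch in Python. The image count and pixel count come from the figures above. The 2 bytes per pixel is purely an assumption for illustration, since the pixel depth is not given here.

```python
# Back-of-envelope estimate of the nightly raw pixel volume.
# ASSUMPTION: 2 bytes per pixel (16-bit samples). The pixel depth is not
# stated in the article, so treat this value as a placeholder.
PIXELS_PER_IMAGE = 3.2e9   # 3.2 gigapixels, from the article
BYTES_PER_PIXEL = 2        # assumed, not from the article


def nightly_raw_terabytes(images_per_night: int) -> float:
    """Return the uncompressed pixel volume in terabytes (1 TB = 1e12 bytes)."""
    return images_per_night * PIXELS_PER_IMAGE * BYTES_PER_PIXEL / 1e12


low = nightly_raw_terabytes(1000)
high = nightly_raw_terabytes(2000)
print(f"{low:.1f} to {high:.1f} TB of raw pixels per night")
```

Under that assumption the night-time images alone come to between 6.4 and 12.8 terabytes, the same order of magnitude as the quoted 20 terabytes once calibration images are included. The exact breakdown is not given.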

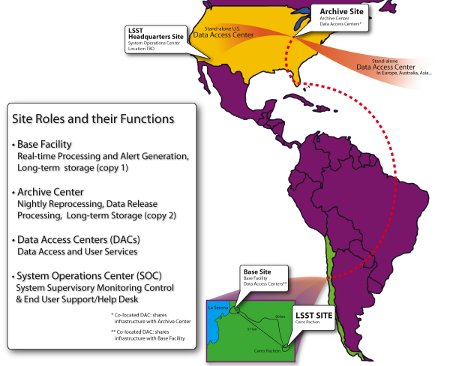

It’s a long journey from the summit of Cerro Pachón in Chile, the future site of the telescope, to the hundreds of research papers that the telescope’s data will inspire over its mandated ten-year lifespan. The journey begins with around-the-clock shifts to monitor the instruments and data for quality control. Scientists will be able to work shifts from either the summit site’s control room or the remote control room at the base site in La Serena, Chile; data will be transmitted between the two sites via dedicated 10-gigabit-per-second fiber-optic lines.

At the base site, approximately 3000 computing nodes with 16 cores each wait to make a rapid analysis of the data as it comes in.

“We have 60 seconds, basically, to do an initial reduction of that data and find any sort of astronomical transient events,” explained Jeff Kantor, project manager of data management for LSST. “And of course we have to distinguish those that are true transients from asteroids and other moving objects.”

As those transient objects are identified, subscribing scientists will receive alerts. This gives them a chance to orient other telescopes on the same patch of sky in order to gather additional data.

Once per day, the raw data and metadata will be transmitted nearly 8000 kilometers to the Archive Center at the National Center for Supercomputing Applications at the University of Illinois at Urbana-Champaign, where it will be re-processed and merged into the archives.
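As a rough illustration of what that daily transfer implies, the sketch below computes the average network rate needed to move one day's worth of data within a given window. It assumes the 20 terabytes per day quoted earlier, in decimal units. The capacity of the link from Chile to Illinois is not stated, and the function name is made up for this example.

```python
# Average network rate needed to ship one day's data within a given window.
DAILY_BYTES = 20e12              # 20 TB per day, from the article (decimal TB assumed)
SECONDS_PER_DAY = 24 * 60 * 60


def required_gbps(num_bytes: float, seconds: float) -> float:
    """Return the sustained rate in gigabits per second (1 Gbit = 1e9 bits)."""
    return num_bytes * 8 / seconds / 1e9


rate = required_gbps(DAILY_BYTES, SECONDS_PER_DAY)
print(f"{rate:.2f} Gbit/s sustained over 24 hours")
```

Spread evenly over a full day, the transfer works out to a little under 2 gigabits per second. Squeezing the same volume into a six-hour window would push the figure to roughly 7.4 gigabits per second.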

Image courtesy of LSST.

The Archive Center will require 100 teraflops of processing power and the capacity for 15 petabytes of storage — at first.

“Once a year we have to take that data and re-process all of the accumulated images since the survey started, in one year. And so our processing requirements go up each year,” Kantor said. “Ultimately, that will require in excess of 250 teraflops of computing power, which is a fairly big chunk of computing capability.”
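A small sketch shows why the annual re-processing job keeps growing. It assumes, as an upper bound, that 20 terabytes are recorded on every day of the year. The real number of observing nights is not given here, and how the quoted teraflops figures relate to the data volume is not stated either.

```python
# Cumulative data volume that an annual re-processing run would have to cover.
DAILY_TB = 20          # from the article
DAYS_PER_YEAR = 365    # ASSUMPTION: data recorded every day (an upper bound)


def accumulated_petabytes(survey_year: int) -> float:
    """Total data recorded from the start of the survey through the given year."""
    return DAILY_TB * DAYS_PER_YEAR * survey_year / 1000


for year in (1, 5, 10):
    print(f"year {year:2d}: about {accumulated_petabytes(year):.1f} PB accumulated")
```

With these assumptions the archive grows by about 7.3 petabytes a year, so a run in the tenth year would cover roughly ten times as much data as a run in the first.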

Scientists and citizens alike will be able to access the data in a variety of ways. Members of the LSST team are researching technologies that will assist them in deploying a science gateway where researchers can access the data and perform basic analyses. Kantor expects, however, that other organizations will want to establish their own portals, which LSST plans to support with open interfaces that comply with Virtual Observatory standards. Likewise, the software, which is all open source, runs on the TeraGrid.

“We are doing prototype implementations of the system right now, during our ‘R&D’ phase, and each year we do a fairly substantial software project and process terabytes of precursor and simulated image data,” Kantor said. “We are using the TeraGrid for that purpose.”

More recently, the LSST team has begun to explore how they could use the resources offered by Open Science Grid.

“Many of our applications are what you’d call embarrassingly parallel,” Kantor explained. “My understanding is that it [OSG] has lots of locations that are well-suited to the embarrassingly parallel type of application.”
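For readers unfamiliar with the term, "embarrassingly parallel" describes work that splits into tasks with no dependence on one another. The Python sketch below illustrates the idea only. The `process_image` function is a hypothetical stand-in, not LSST software, and threads are used simply to keep the example short.

```python
from concurrent.futures import ThreadPoolExecutor


def process_image(image_id: int) -> tuple[int, int]:
    """Hypothetical stand-in for a per-image reduction step.

    It depends only on its own input, which is what makes the
    workload embarrassingly parallel.
    """
    pixels = [(image_id * 31 + i) % 256 for i in range(1000)]  # fake pixel data
    return image_id, sum(pixels)


def process_all(image_ids, max_workers: int = 4) -> dict[int, int]:
    """Run every image through process_image, with no task waiting on another."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return dict(pool.map(process_image, image_ids))


results = process_all(range(8))
print(f"processed {len(results)} images independently")
```

Because no task needs another task's result, the same pattern can in principle be spread across many separate machines, which is the kind of workload Kantor describes as a fit for a grid.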

It’s still early days for the LSST, which is scheduled to complete its design and development phase in a few years and construction and commissioning within a decade. Over twenty years of preparation will culminate in a ten-year survey. Creating the telescope and its infrastructure has certainly posed a set of challenging technical problems.

Said Kantor, “Why do we think we can do it? Because we’ve got pretty much world experts in every key area on the team, from petascale database to astronomical data processing algorithms.”