Particle physics and machine learning have long been intertwined.

One of the earliest examples of this relationship dates back to the 1960s, when physicists were using bubble chambers to search for particles invisible to the naked eye. These vessels were filled with a clear liquid kept under pressure near its boiling point. When the pressure was suddenly released, the liquid became superheated. Even the slightest boost in energy, such as from a charged particle passing through it, would then make it boil along the particle’s path, and a camera photographed the resulting trails of bubbles.

Scanners, often women, took on the job of inspecting these photographs for particle tracks. Physicist Paul Hough handed that task over to machines when he developed the Hough transform, a pattern-recognition algorithm for identifying the tracks.
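The core idea of the Hough transform is voting. Every hit votes for all the straight lines that could pass through it, and the line that collects the most votes is the likeliest track. The Python sketch below is a minimal illustration, not Hough’s own implementation. It uses the angle-distance (θ, ρ) parametrization later popularized by Duda and Hart, not Hough’s original slope-intercept form. The function name `hough_lines`, its bin sizes and the sample hits are illustrative choices. It also handles straight tracks only; tracks bending in a magnetic field need a different parametrization.

```python
import math
from collections import Counter


def hough_lines(points, n_theta=180, rho_step=1.0):
    """Return (theta, rho, votes) for the straight line supported by the most points.

    Each point (x, y) votes for every line x*cos(theta) + y*sin(theta) = rho
    that passes through it, with theta sampled over [0, pi) and rho binned
    to multiples of rho_step.
    """
    votes = Counter()
    for x, y in points:
        for t in range(n_theta):
            theta = math.pi * t / n_theta
            rho = x * math.cos(theta) + y * math.sin(theta)
            votes[(t, round(rho / rho_step))] += 1
    # The accumulator bin with the most votes is the best-supported line.
    (t, r), count = votes.most_common(1)[0]
    return math.pi * t / n_theta, r * rho_step, count


# A hypothetical straight track along y = x, plus three unrelated "noise" hits.
hits = [(x, x) for x in range(10)] + [(2, 8), (7, 1), (9, 3)]
theta, rho, count = hough_lines(hits)
print(f"theta={math.degrees(theta):.0f} deg, rho={rho:.1f}, votes={count}")
```

All ten track hits land in the winning bin. The recovered angle falls within a few degrees of 135° (the direction perpendicular to y = x), and the coarse angular binning accounts for the small offset. The three noise hits never agree on a common line, so they cannot outvote the track.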

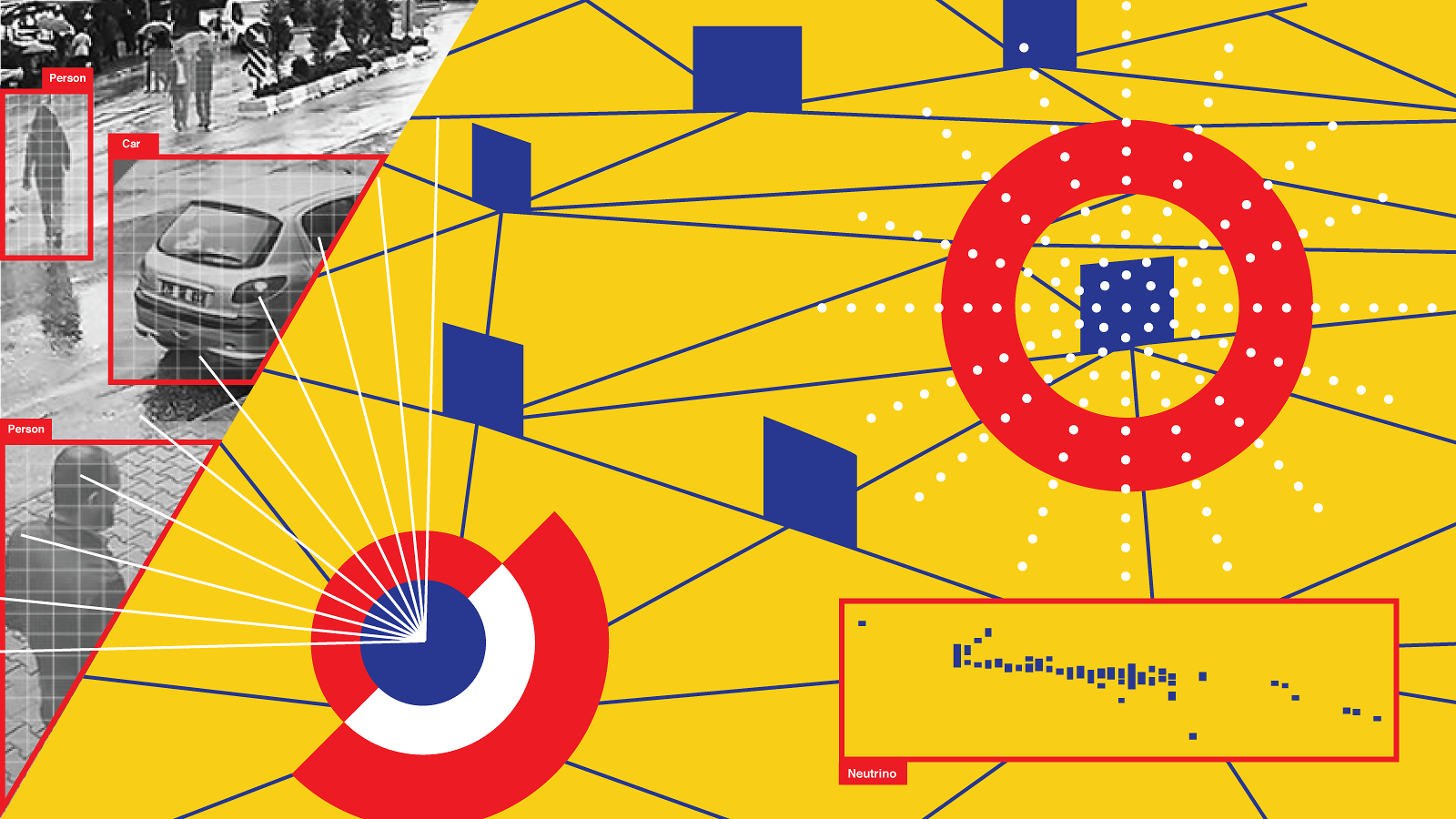

The computer science community later adapted the Hough transform for applications such as computer vision, the effort to train computers to replicate the complex function of the human eye.

“There’s always been a little bit of back and forth” between these two communities, says Mark Messier, a physicist at Indiana University.

Since then, machine learning has advanced rapidly. Deep learning, a form of artificial intelligence loosely inspired by the human brain, has been applied to a wide range of tasks, such as identifying faces, playing video games and even synthesizing lifelike videos of politicians.

Over the years, algorithms that help scientists pick out interesting signals from background data have been used in physics experiments such as BaBar at SLAC National Accelerator Laboratory and experiments at the Large Electron-Positron Collider at CERN and the Tevatron at Fermi National Accelerator Laboratory. More recently, algorithms that learn to recognize patterns in large datasets have proved useful for physicists studying hard-to-catch particles called neutrinos.

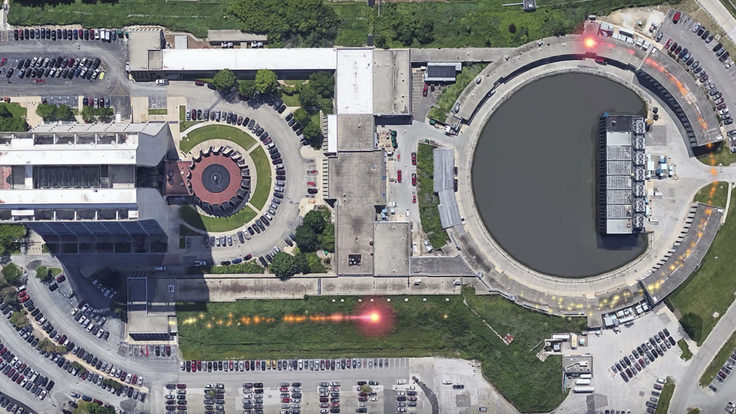

This includes scientists on the NOvA experiment, who study a beam of neutrinos created at the US Department of Energy’s Fermilab near Chicago. The neutrinos stream straight through Earth to a 14,000-metric-ton detector filled with liquid scintillator sitting near the Canadian border in Minnesota.

When a neutrino interacts in the liquid scintillator, it produces a burst of particles. The detector records the pattern and energy of those particles, and scientists use that information to figure out what happened in the original neutrino interaction.
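The recorded pattern of energy deposits can be treated as a picture, and that picture is what a neural network later analyzes. As a minimal sketch, the code below assumes a detector segmented into planes of cells and a hypothetical hit format of (plane, cell, energy) tuples, with energy in arbitrary units. It simply adds each deposit into a pixel grid indexed by plane and cell. The hit format, grid size and function name are illustrative assumptions, not any experiment’s actual data format.

```python
def hits_to_image(hits, n_planes, n_cells):
    """Sum (plane, cell, energy) hits into an n_planes x n_cells grid of pixels.

    The hit format is a hypothetical simplification: each hit says which
    detector plane and cell recorded light, and how much energy was deposited.
    """
    image = [[0.0] * n_cells for _ in range(n_planes)]
    for plane, cell, energy in hits:
        image[plane][cell] += energy  # repeated hits in one cell accumulate
    return image


# A short, made-up track crossing three planes.
hits = [(0, 1, 0.5), (1, 2, 0.75), (2, 3, 0.5), (2, 3, 0.25)]
image = hits_to_image(hits, n_planes=3, n_cells=5)
for row in image:
    print(" ".join(f"{e:4.2f}" for e in row))
```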

“Our job is almost like reconstructing a crime scene,” Messier says. “A neutrino interacts and leaves traces in the detector—we come along afterward and use what we can see to try and figure out what we can about the identity of the neutrino.”

Over the last few years, scientists have started to use algorithms called convolutional neural networks (CNNs) to take on this task instead.

CNNs, which are loosely modeled after the mammalian visual cortex, are widely used in the technology industry, for example to improve computer vision for self-driving cars. These networks are composed of multiple layers that act somewhat like filters: They contain interconnected nodes governed by numerical values, or weights, which are adjusted and refined during training as example inputs pass through.
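The “filter” analogy can be made concrete with a minimal Python sketch of what one convolutional layer computes. A small grid of weights slides across the image. At each position the overlapping pixel values are multiplied by the weights and summed, and the result passes through a ReLU nonlinearity. The image and filter values here are made up for illustration, and the weights are hand-picked. In a real CNN they are learned during training, and many such filters are stacked in layers.

```python
def conv2d(image, kernel):
    """Valid 2D cross-correlation, the operation deep-learning 'convolution' layers compute."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Keep positive responses and zero out negative ones."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Hypothetical 5x5 "detector image": a diagonal track of energy deposits.
image = [
    [1, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 1, 0],
    [0, 0, 0, 0, 1],
]
# Hand-picked weights that respond to diagonal segments; a trained CNN
# would learn its filter weights instead.
diagonal_filter = [
    [1, -1, -1],
    [-1, 1, -1],
    [-1, -1, 1],
]
features = relu(conv2d(image, diagonal_filter))
```

The resulting feature map lights up only where the filter sits squarely on the track and stays at zero elsewhere. That is the sense in which a layer “filters” the image for one kind of pattern.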

“The ‘deep’ part comes from the fact that there are many layers to it,” explains Adam Aurisano, an assistant professor at the University of Cincinnati. “[With deep learning] you can take nearly raw data, and by pushing it through these stacks of learnable filters, you wind up extracting nearly optimal features.”

For example, these algorithms can extract details of particle interactions of varying complexity from “images” made by recording the patterns of energy deposited in particle detectors.

“Those stacks of filters have sort of sliced and diced the image and extracted physically meaningful bits of information that we would have tried to reconstruct before,” Aurisano says.
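Once the filters have extracted such features, a final step can assign a label to a whole event without reconstructing it. One common design, sketched here under simplifying assumptions, first averages each feature map down to a single number (global average pooling). It then combines those numbers with learned weights and converts the resulting scores into probabilities with a softmax. The class labels, weights and feature maps below are hypothetical, and this is not a description of any particular experiment’s network.

```python
import math


def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify_event(feature_maps, weights, biases, labels):
    """Label a whole event from its feature maps; weights[k][c] links map c to class k."""
    # Global average pooling: collapse each 2D feature map to its mean value.
    pooled = [sum(map(sum, fm)) / (len(fm) * len(fm[0])) for fm in feature_maps]
    # A linear layer: one score per class from the pooled features.
    scores = [sum(w * p for w, p in zip(row, pooled)) + b for row, b in zip(weights, biases)]
    probs = softmax(scores)
    best = max(range(len(labels)), key=lambda k: probs[k])
    return labels[best], probs


feature_maps = [
    [[1.0, 1.0], [1.0, 1.0]],  # hypothetical map from a filter tuned to long tracks
    [[0.0, 0.0], [0.0, 0.0]],  # hypothetical map from a filter tuned to showers
]
weights = [[2.0, -1.0], [-1.0, 2.0]]  # made-up values; real ones are learned
biases = [0.0, 0.0]
label, probs = classify_event(feature_maps, weights, biases, ["track-like", "shower-like"])
```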

Although they can be used to classify events without reconstructing them, CNNs can also reconstruct particle interactions using a method called semantic segmentation.

When applied to an image of a table, for example, this method would identify the object by tagging each pixel that belongs to it, Aurisano explains. In the same way, scientists can label each pixel according to the part of a neutrino interaction it records, then use algorithms to reconstruct the event.
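The two steps described above can be sketched in Python: first label every pixel, then assemble labeled pixels into objects. This simplified illustration assumes a network has already produced a score for each pixel and each class. The class list (“background,” “track,” “shower”), the scores and the connected-component grouping are hypothetical stand-ins for the more sophisticated reconstruction algorithms used on real experiments.

```python
from collections import deque

LABELS = ["background", "track", "shower"]  # hypothetical pixel classes


def segment(pixel_scores):
    """Semantic segmentation output: pick the highest-scoring class for every pixel."""
    return [[max(range(len(s)), key=lambda k: s[k]) for s in row] for row in pixel_scores]


def clusters(label_map, background=0):
    """Group neighboring pixels that share a non-background label (4-connectivity)."""
    h, w = len(label_map), len(label_map[0])
    seen = [[False] * w for _ in range(h)]
    found = []
    for i in range(h):
        for j in range(w):
            lab = label_map[i][j]
            if lab == background or seen[i][j]:
                continue
            # Breadth-first search collects every connected pixel with the same label.
            seen[i][j] = True
            queue, members = deque([(i, j)]), []
            while queue:
                y, x = queue.popleft()
                members.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w and not seen[ny][nx] and label_map[ny][nx] == lab:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            found.append((LABELS[lab], sorted(members)))
    return found


# Made-up per-pixel class scores for a 3x3 image.
T, B, S = [0.1, 0.8, 0.1], [0.9, 0.05, 0.05], [0.1, 0.2, 0.7]
scores = [[T, T, B], [B, B, S], [B, S, S]]
label_map = segment(scores)
result = clusters(label_map)
```

Each entry in `result` pairs a label with the pixels that make up one object, which gives a simple starting point for reconstructing the event.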

Physicists are using this method to analyze data collected from the MicroBooNE neutrino detector.

“The nice thing about this process is that you might find a cluster that’s made by your network that doesn’t fit in any interpretation in your model,” says Kazuhiro Terao, a scientist at SLAC National Accelerator Laboratory. “That might be new physics. So we could use these tools to find stuff that we might not understand.”

Scientists working on other particle physics experiments, such as those at the Large Hadron Collider at CERN, are also using deep learning for data analysis.

“All these big physics experiments are really very similar at the machine learning level,” says Pierre Baldi, a computer scientist at the University of California, Irvine. “It’s all images associated with these complex, very expensive detectors, and deep learning is the best method for extracting signal against some background noise.”

Although most of the knowledge currently flows from computer scientists to particle physicists, other communities may also gain new tools and insights from these experimental applications.

For example, according to Baldi, one question currently under discussion is whether scientists can write software that works across all these physics experiments with minimal human tuning. If this goal were achieved, it could benefit other fields, such as biomedical imaging, that also use deep learning. “[The algorithm] would look at the data and calibrate itself,” he says. “That’s an interesting challenge for machine learning methods.”

Another future direction, Terao says, would be to get machines to ask questions—or, more simply, to be able to identify outliers and try to figure out how to explain them.

“If the AI can form a question and come up with a logical sequence to solve it, then that replaces a human,” he says. “To me, the kind of AI you want to see is a physics researcher—one that can do scientific research.”