In the 1990s, an experiment conducted in Los Alamos, about 35 miles northwest of the capital of New Mexico, appeared to find something odd.

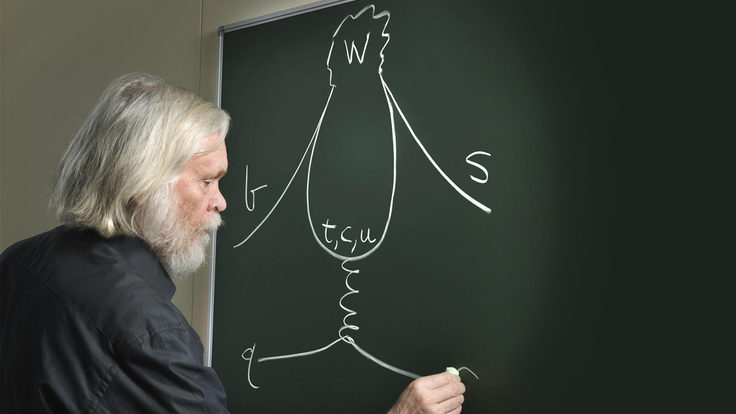

Scientists designed the Liquid Scintillator Neutrino Detector experiment at the US Department of Energy’s Los Alamos National Laboratory to count neutrinos, ghostly particles that come in three types and rarely interact with other matter. LSND was looking for evidence of neutrino oscillation, or neutrinos changing from one type to another.

Several previous experiments had seen indications of such oscillations, which show that neutrinos have small masses not incorporated into the Standard Model, the ruling theory of particle physics. LSND scientists wanted to double-check these earlier measurements.

By studying a nearly pure source of one type of neutrino (muon neutrinos), LSND did find evidence of oscillation to a different type, electron neutrinos. However, the detector recorded many more electron neutrinos than predicted, creating a new puzzle.

This excess could have been a sign that neutrinos oscillate among not three but four different types. The fourth would be a new kind of neutrino, called a sterile neutrino, which theorists had proposed as a way to incorporate tiny neutrino masses into the Standard Model.

Or there could be another explanation. The question is: What? And how can scientists guard against being fooled in physics?

Brand new thing

Many physicists are looking for results that go beyond the Standard Model. They come up with experiments to test its predictions; if what they find doesn’t match up, they have potentially discovered something new.

“Do we see what we expected from the calculations if all we have there is the Standard Model?” says Paris Sphicas, a researcher at CERN. “If the answer is yes, then it means we have nothing new. If the answer is no, then you have the next question, which is, ‘Is this within the uncertainties of our estimates? Could this be a result of a mistake in our estimates?’ And so on and so on.”

A long list of possible factors can trick scientists into thinking they’ve made a discovery. A big part of scientific research is identifying them and finding ways to test what’s really going on.

“The community standard for discovery is a high bar, and it ought to be,” says Yale University neutrino physicist Bonnie Fleming. “It takes time to really convince ourselves we’ve really found something.”

In the case of the LSND anomaly, scientists wonder whether unaccounted-for background events tipped the scales or whether some sort of mechanical problem caused an error in the measurement.

Scientists have designed follow-up experiments to see if they can reproduce the result. An experiment called MiniBooNE, hosted by Fermi National Accelerator Laboratory, recently reported seeing signs of a similar excess. Other experiments, such as the MINOS experiment, also at Fermilab, have not seen it, complicating the search.

“[LSND and MiniBooNE] are clearly measuring an excess of events over what they expect,” says MINOS co-spokesperson Jenny Thomas, a physicist at University College London. “Are those important signal events, or are they a background they haven’t estimated properly? That’s what they are up against.”

Managing expectations

Much of the effort in understanding a signal goes into preparation before one is even seen.

In designing an experiment, researchers need to understand what physics processes can produce or mimic the signal being sought, events that are often referred to as “background.”

Physicists can predict backgrounds through simulations of experiments. Some types of detector backgrounds can be identified through “null tests,” such as pointing a telescope at a blank wall. Other backgrounds can be identified through tests with the data itself, such as so-called “jack-knife tests,” which involve splitting data into subsets (say, data from Monday and data from Tuesday) that by design should produce statistically consistent results. Any inconsistency would warn scientists about a signal that appears in just one subset.
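For readers who like to see the idea in code, a jack-knife comparison can be sketched in a few lines of Python. This is a minimal illustration, not any experiment's actual procedure: the function name `split_z_score`, the event counts and the assumption of simple Poisson counting are all hypothetical.

```python
import math

def split_z_score(count_a, count_b, exposure_a=1.0, exposure_b=1.0):
    """Z-score for the difference in event rate between two data subsets.

    Assumes plain Poisson counting, so the variance of each count
    equals the count itself. Exposures are in arbitrary but equal units.
    """
    rate_a = count_a / exposure_a
    rate_b = count_b / exposure_b
    variance = count_a / exposure_a**2 + count_b / exposure_b**2
    if variance == 0:
        return 0.0  # no events in either subset, nothing to compare
    return (rate_a - rate_b) / math.sqrt(variance)

# Hypothetical event counts for two days of equal running time.
z_consistent = split_z_score(1000, 1040)  # small difference between days
z_suspicious = split_z_score(1000, 1300)  # one day shows far more events

print(f"consistent split: z = {z_consistent:.2f}")
print(f"suspicious split: z = {z_suspicious:.2f}")
```

A z-score near zero says the two subsets agree within counting statistics. A large one flags something that shows up in only part of the data and deserves a closer look before anyone calls it a signal.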

Researchers looking at a specific signal work to develop a deep understanding of what other physics processes could produce the same signature in their detector. MiniBooNE, for example, studies a beam primarily made of muon neutrinos to measure how often those neutrinos oscillate to other flavors. But it will occasionally pick up stray electron neutrinos, which look like muon neutrinos that have transformed. Beyond that, other physics processes can mimic the signal of an electron neutrino event.

“We know we’re going to be faked by those, so we have to do the best job to estimate how many of them there are,” Fleming says. “Whatever excess we find has to be in addition to those.”
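The arithmetic behind "in addition to those" can be sketched roughly as follows. The numbers are invented for illustration and are not LSND or MiniBooNE results. The function name `excess_significance` is hypothetical, and the Gaussian approximation used here is a simplification that real analyses replace with more careful statistics.

```python
import math

def excess_significance(observed, background, background_uncertainty=0.0):
    """Return (excess, approximate significance in standard deviations).

    Assumes Poisson fluctuations on the expected background plus an
    independent Gaussian uncertainty on the background estimate itself.
    """
    if background <= 0:
        raise ValueError("expected background must be positive")
    excess = observed - background
    sigma = math.sqrt(background + background_uncertainty**2)
    return excess, excess / sigma

# Illustrative numbers only: 130 events seen where 100 were expected.
excess, z_stat_only = excess_significance(130, 100)
_, z_with_syst = excess_significance(130, 100, background_uncertainty=10)

print(f"excess = {excess} events")
print(f"significance, counting statistics only: {z_stat_only:.2f}")
print(f"significance, with background uncertainty: {z_with_syst:.2f}")
```

The same excess looks less impressive once the uncertainty on the background estimate is included, which is why a background that has not been estimated properly can make or break a claimed signal.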

Even more variable than a particle beam: human beings. While science strives to be an objective measurement of facts, the process itself is conducted by a collection of people whose actions can be colored by biases, personal stories and emotion. A preconceived notion that an experiment will (or won’t) produce a certain result, for example, could influence a researcher’s work in subtle ways.

“I think there’s a stereotype that scientists are somehow dispassionate, cold, calculating observers of reality,” says Brian Keating, an astrophysicist at the University of California San Diego and author of the book Losing the Nobel Prize, which chronicles how the desire to make a prize-winning discovery can steer a scientist away from best practices. “In reality, the truth is we actually participate in it, and there are sociological elements at work that influence a human being. Scientists, despite the stereotypes, are very much human beings.”

Staying cognizant of this fact and incorporating methods for removing bias are especially important if a particular claim upends long-standing knowledge—such as, for example, our understanding of neutrinos. In these cases, scientists know to adhere to the adage: Extraordinary claims require extraordinary evidence.

“If you’re walking outside your house and you see a car, you probably think, ‘That’s a car,’” says Jonah Kanner, a research scientist at Caltech. “But if you see a dragon, you might think, ‘Is that really a dragon? Am I sure that’s a dragon?’ You’d want a higher level of evidence.”

Dragon or discovery?

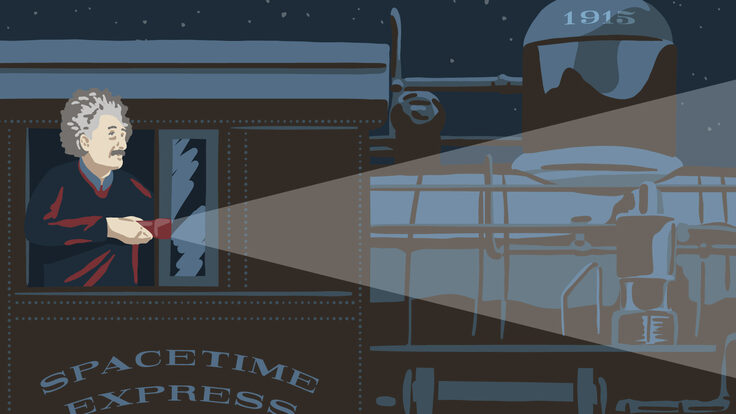

Physicists have been burned by dragons before. In 1969, for example, a scientist named Joe Weber announced that he had detected gravitational waves: ripples in the fabric of space-time first predicted by Albert Einstein in 1916. Such a detection, which many had thought was impossible to make, would have proved a key tenet of relativity. Weber rocketed to momentary fame, until other physicists found they could not replicate his results.

The false discovery rocked the gravitational wave community, which, over the decades, became increasingly cautious about making such announcements.

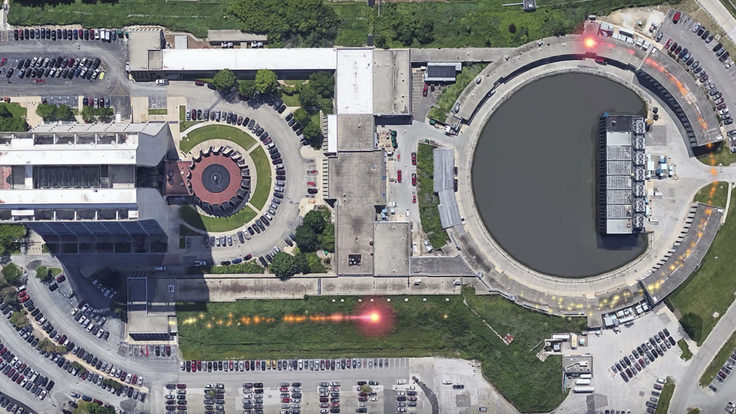

So in 2009, as the Laser Interferometer Gravitational-Wave Observatory, or LIGO, came online for its next science run, the scientific collaboration came up with a way to make sure its members stayed skeptical of their results. They developed a method of adding a false or simulated signal into the detector data stream without alerting the majority of the 800 or so researchers on the team. They called it a blind injection. The rest of the members knew an injection was possible, but not guaranteed.

“We’d been not detecting signals for 30 years,” Kanner, a member of the LIGO collaboration, says. “How clear or obvious would the signature have to be for everyone to believe it?... It forced us to push our algorithms and our statistics and our procedures, but also to test the sociology and see if we could get a group of people to agree on this.”

In late 2010, the team got the alert they had been waiting for: The computers detected a signal. For six months, hundreds of scientists analyzed the results, eventually concluding that the signal looked like gravitational waves. They wrote a paper detailing the evidence, and more than 400 team members voted on its approval. Then a senior member told them it had all been faked.

Picking out an artificial signal and examining it at such length may seem like a waste of time, but the test worked just as intended. The exercise forced the scientists to work through all of the ways they would need to scrutinize a real result before one ever came through. It forced the collaboration to develop new tests and approaches to demonstrating the consistency of a possible signal in advance of a real event.
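The logic of a blind injection can be captured in a toy Python sketch. This is not LIGO's software or waveform model. The burst shape, the threshold of 5 and every name in it (`toy_template`, `maybe_inject`, `search`) are assumptions made up for illustration. The key feature is that the analysis runs on the data stream alone, while the record of whether anything was added stays sealed until the end.

```python
import math
import random

TEMPLATE_LENGTH = 64  # hypothetical length of the toy waveform, in samples

def toy_template(length=TEMPLATE_LENGTH):
    """A short windowed sine burst standing in for a real waveform model."""
    return [math.sin(2 * math.pi * 4 * i / length) * math.sin(math.pi * i / length)
            for i in range(length)]

def maybe_inject(data, rng, probability=0.5, amplitude=3.0):
    """Return (stream, sealed_record); the record is None if nothing was added."""
    stream = list(data)
    if rng.random() >= probability:
        return stream, None
    template = toy_template()
    start = rng.randrange(len(stream) - len(template))
    for i, value in enumerate(template):
        stream[start + i] += amplitude * value
    return stream, {"start": start, "amplitude": amplitude}

def search(stream):
    """Slide the template along the stream; return (best_offset, best_statistic).

    For unit-variance white noise, the statistic at any single offset
    behaves roughly like a standard normal draw.
    """
    template = toy_template()
    norm = math.sqrt(sum(v * v for v in template))
    best_offset, best_stat = 0, float("-inf")
    for offset in range(len(stream) - len(template) + 1):
        corr = sum(stream[offset + i] * v for i, v in enumerate(template))
        stat = corr / norm
        if stat > best_stat:
            best_offset, best_stat = offset, stat
    return best_offset, best_stat

rng = random.Random(2010)  # fixed seed so the toy run is repeatable
noise = [rng.gauss(0.0, 1.0) for _ in range(2000)]
stream, sealed_record = maybe_inject(noise, rng)

# The analysts see only `stream`, never `sealed_record`.
offset, statistic = search(stream)
is_candidate = statistic > 5.0  # hypothetical detection threshold

# Only after the analysis is finished is the envelope opened.
was_injection = sealed_record is not None
print(f"candidate: {is_candidate}, injection present: {was_injection}")
```

Because the analysts cannot know whether a candidate is real or planted, they have to treat every candidate with the same rigor, which is the point of the exercise.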

“It was designed to keep us honest in a sense,” Kanner says. “Everyone to some extent goes in with some guess or expectation about what’s going to come out of that experiment. Part of the idea of the blind injection was to try and tip the scales on that bias, where our beliefs about whether we thought nature should produce an event would be less important.”

All of the hard work paid off: In September 2015, when an authentic signal hit the LIGO detectors, scientists knew what to do. In 2016, the collaboration announced the first confirmed direct detection of gravitational waves. One year later, the discovery won the Nobel Prize.

No easy answers

While blind injections worked for the gravitational-wave community, each area of physics presents its own challenges.

Neutrino physicists have an extremely small sample size with which to work, because their particles interact so rarely. That’s why experiments such as the NOvA experiment and the upcoming Deep Underground Neutrino Experiment use such enormous detectors.

Astronomers have even fewer samples: They have just one universe to study, and no way to conduct controlled experiments. That’s why they conduct decades-long surveys, to collect as much data as possible.

Researchers working at the Large Hadron Collider have no shortage of interactions to study: an estimated 600 million events are detected every second. But due to the enormous size, cost and complexity of the technology, scientists have built only one LHC. That’s why the collider hosts multiple detectors of different designs, which can check one another’s work by measuring the same things in a variety of ways.

While there are many central tenets to checking a result—knowing your experiment and background well, running simulations and checking that they agree with your data, testing alternative explanations of a suspected result—there’s no comprehensive checklist that every physicist performs. Strategies vary from experiment to experiment, among fields and over time.

Scientists must do everything they can to test a result, because in the end, it will need to stand up to the scrutiny of their peers. Fellow physicists will question the new result, subject it to their own analyses, try out alternative interpretations, and, ultimately, try to repeat the measurement in a different way. Especially if they’re dealing with dragons.