In a provocative section of a talk at the American Physical Society meeting in Washington, DC, yesterday, theorist Matthew Strassler from Rutgers University challenged particle theorists to not be too simple in their analyses. Most people would probably not claim that theoretical particle physics is too simple, but Strassler argued that nature is likely to be even more complicated than physicists expect. And if theorists only properly examine the simplest classes of models, where simple is a relative term, they might be led astray in interpreting future Large Hadron Collider data.

Physicists know that the Standard Model of particle physics is broken, but they don’t yet know how to fix it. The general approach is to augment the Standard Model with new particles, forces, or phenomena and see what those extended theories predict. Then when experimenters gather more data, they will be able to see which theories might best reflect reality.

As is natural, theorists start by examining the models that are the simplest extensions of the Standard Model. Those simplest extensions usually have the word "minimal" in their name, such as the Minimal Supersymmetric Standard Model or the Minimal Technicolor Model.

“The preference for minimal models is unquestionably a cultural bias,” Strassler claimed. He said that many theorists reason that minimal models are elegant, that elegant models appear well motivated, and that they are therefore appreciated. The same reasoning runs in reverse, he said: non-minimal models are considered inelegant, not well motivated, and therefore unappreciated.

He gave several historical examples of cases where minimalism has failed. When the muon, a heavy form of the electron, was discovered in 1936, it tipped off physicists to the existence of a second generation of leptons beyond the seemingly sufficient electron. Isidor Isaac Rabi asked in shock, “Who ordered that?”

Strassler gave other examples of non-minimalism such as the fact that neutrinos have non-zero mass and that there is dark energy, which he phrased in terms of the existence of a non-zero cosmological constant.

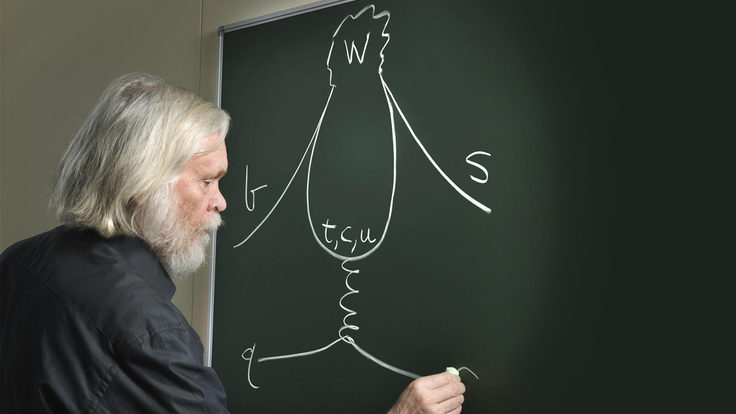

He argued that physicists are entering a post-minimal era in which the potentially sufficient minimal theories developed so far, especially in the 1990s, will not be enough to describe what we see at the LHC. Strassler argued for the exploration of other theoretical frameworks, such as his own hidden valleys framework, which includes a new set of light neutral particles and new forces. He also mentioned frameworks by others, including “quirks”, “unparticles”, and the “WIMPless miracle”, all of which are non-minimal. These models might be tested with Tevatron and LHC data or even with existing data from the BaBar and Belle experiments. “Light, neutral particles could be a blind spot in our field,” he said. Strassler argued that low-energy experiments at the intensity frontier will be vital in advancing theoretical understanding.

While there is no question among theorists that they are not exploring all of the possibilities, some theorists and journal editors at the conference seemed unpersuaded by the argument on practical grounds. Theorists only have so much time, and they don’t really know which direction to go in when making more complicated theories. They have too many choices open to them and no reliable way to decide which might be most fruitful. One editor of Physical Review Letters commented that the journal wouldn’t typically be interested in papers that head off in a random direction with an extension of theories beyond the minimal models, unless they showed some particularly dramatic signature in a detector. As an example, the editor cited a paper from last year whose model included a new type of force represented by a heavy particle called the Z’, which would decay into a very clear signature of six leptons (such as electrons and muons).

With the LHC expected to switch back on in the next week or so, the data will begin to flow quite rapidly and, as discussed by many other presenters at the conference, results will quickly begin to winnow down the theories that have been proposed in the past decades. Strassler’s warning may prove most salient if the data rule out most existing models. In that case, theorists shouldn’t be too quick to conclude that what remains is a true reflection of reality unless they have dug much deeper into some of the more complicated models. Only then might they be assured that they are getting closer to painting a true picture of how nature works at its most fundamental level.