The World Wide Web may have been invented at CERN, but it was raised and cultivated abroad. Now a group of Large Hadron Collider physicists is looking outside academia to solve one of the biggest challenges in physics: creating a software framework that is sophisticated, sustainable and more compatible with the rest of the world.

“The software we used to build the LHC and perform our analyses is 20 years old,” says Peter Elmer, a physicist at Princeton University. “Technology evolves, so we have to ask, does our software still make sense today? Will it still do what we need 20 or 30 years from now?”

Elmer is part of a new initiative funded by the National Science Foundation called the DIANA/HEP project, or Data Intensive ANAlysis for High Energy Physics. The DIANA project has one main goal: improve high-energy physics software by incorporating best practices and algorithms from other disciplines.

“We want to discourage physics from re-inventing the wheel,” says Kyle Cranmer, a physicist at New York University and co-founder of the DIANA project. “There has been an explosion of high-quality scientific software in recent years. We want to start incorporating the best products into our research so that we can perform better science more efficiently.”

DIANA is the first project explicitly funded to work on sustainable software, but it is not alone in the endeavor to improve the way high-energy physicists perform their analyses. In 2010, physicist Noel Dawe started the rootpy project, a community-driven initiative to improve the interface between ROOT and Python.

“ROOT is the central tool that every physicist in my field uses,” says Dawe, who was a graduate student at Simon Fraser University when he started rootpy and is currently a fellow at the University of Melbourne. “It does quite a bit, but sometimes the best tool for the job is something else. I started rootpy as a side project when I was a graduate student because I wanted to find ways to interface ROOT code with other tools.”

Physicists began developing ROOT in the 1990s in the programming language C++. The software has evolved a lot since then, but it has slowly become outdated, cumbersome and difficult to interface with new scientific tools written in languages such as Python or Julia. C++ has also evolved over the last 20 years, but physicists must maintain a level of backward compatibility in order to preserve some of their older code.

“It’s in a bubble,” says Gilles Louppe, a machine learning expert working on the DIANA project. “It’s hard to get in and it’s hard to get out. It’s isolated from the rest of the world.”

Before coming to CERN, Louppe was a core developer of the machine learning platform scikit-learn, an open-source library of versatile data mining and data analysis tools. He is now a postdoctoral researcher at New York University and works closely with physicists to improve the interoperability between common LHC software products and the scientific Python ecosystem. Improved interoperability will make it easier for physicists to benefit from global advancements in machine learning and data analysis.

“Software and technology are changing so fast,” Cranmer says. “We can reap the rewards of industry and everything the world is coming up with.”

One trend that is spreading rapidly in the data science community is the computational notebook: a hybrid of analysis code, plots and narrative text. Project Jupyter is developing the technology that enables these notebooks. Two developers from the Jupyter team recently visited CERN to work with the ROOT team and further develop the ROOT version of the notebook, ROOTbook.

“ROOTbooks represent a confluence of two communities and two technologies,” says Cranmer.

Physics patterns

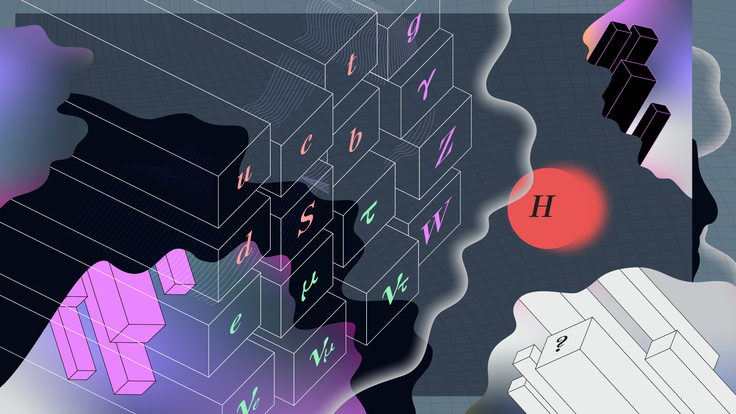

To perform tasks such as identifying and tagging particles, physicists use machine learning. They essentially train their LHC software to identify certain patterns in the data by feeding it thousands of simulations. According to Elmer, this task is like one big “needle in a haystack” problem.

“Imagine the book Where’s Waldo. But instead of just looking for one Waldo in one picture, there are many different kinds of Waldos and 100,000 pictures every second that need to be analyzed.”

But what if these programs could learn to recognize patterns on their own with only minimal guidance? One small step outside the LHC is a thriving multibillion-dollar industry doing just that.

“When I take a picture with my iPhone, it instantly interprets the thousands of pixels to identify people’s faces,” Elmer says. Companies like Facebook and Google are also incorporating more and more machine learning techniques to identify and catalogue information so that it is instantly accessible anywhere in the world.

Organizations such as Google, Facebook and Russia’s Yandex are releasing more and more tools as open source. Scientists in other disciplines, such as astronomy, are incorporating these tools into the way they do science. Cranmer hopes that high-energy physics will move to a model that makes it easier to take advantage of these new offerings as well.

“New software can expand the reach of what we can do at the LHC,” Cranmer says. “The potential is hard to guess.”