In the 1960s, physicists at the University of California, Berkeley saw evidence of new, unexpected particles popping up in data from their bubble chamber experiments.

But before throwing a party, the scientists did another experiment. They repeated their analysis, but instead of using the real data from the bubble chamber, they used fake data generated by a computer program that assumed there were no new particles.

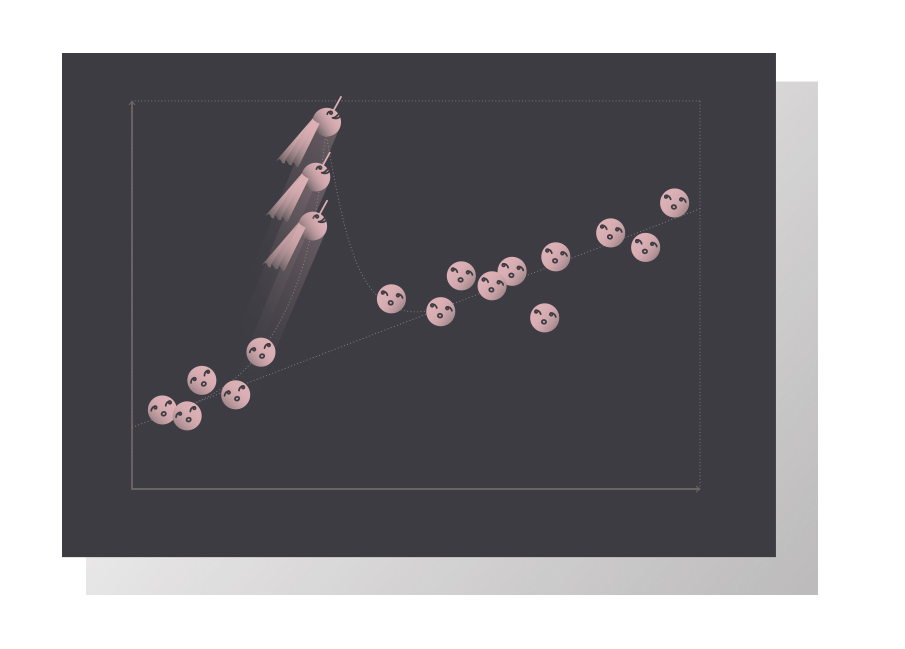

The scientists performed a statistical analysis on both sets of data, printed the histograms, pinned them to the wall of the physics lounge, and asked visitors to identify which plots showed the new particles and which plots were fakes.

No one could tell the difference. The fake plots had just as many impressive deviations from the theoretical predictions as the real plots.

Eventually, the scientists determined that some of the unexpected bumps in the real data were the fingerprints of new composite particles. The bumps in the fake data, which contained no new particles by construction, could only be random statistical fluctuations.
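The Berkeley fake-data check is an early example of what is often called a toy Monte Carlo or pseudo-experiment study: simulate many datasets under the no-new-particles hypothesis and count how often noise alone produces a striking bump. The sketch below is a minimal illustration, not the Berkeley program. The 50-bin histogram, the 100 expected background events per bin, the simple Gaussian significance estimate, and the helper names `poisson` and `largest_bump` are all assumptions made for this example.

```python
import math
import random

def poisson(rng: random.Random, lam: float) -> int:
    """Draw a Poisson-distributed count (Knuth's multiplication method; fine for modest lam)."""
    threshold = math.exp(-lam)
    k, prod = 0, 1.0
    while True:
        prod *= rng.random()
        if prod <= threshold:
            return k
        k += 1

def largest_bump(rng: random.Random, n_bins: int, expected_per_bin: float) -> float:
    """Largest upward deviation, in sigma, in one fake background-only histogram."""
    worst = -math.inf
    for _ in range(n_bins):
        observed = poisson(rng, expected_per_bin)  # pure noise: no new particle in the fake data
        # Gaussian approximation to the significance of a single bin's excess
        z = (observed - expected_per_bin) / math.sqrt(expected_per_bin)
        worst = max(worst, z)
    return worst

rng = random.Random(1960)  # fixed seed so the run is reproducible
n_histograms, n_bins, expected = 200, 50, 100.0  # hypothetical settings
bumps = [largest_bump(rng, n_bins, expected) for _ in range(n_histograms)]
frac_2sigma = sum(b >= 2.0 for b in bumps) / n_histograms
print(f"fake histograms with a >= 2 sigma bump somewhere: {frac_2sigma:.0%}")
print(f"largest fake bump seen: {max(bumps):.1f} sigma")
```

With these settings, most of the simulated histograms contain at least one bin sitting two or more sigma above the prediction, even though no signal was ever put in.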

So how do scientists differentiate between random statistical fluctuations and real discoveries?

Just as a baseball analyst can’t judge whether a rookie is the next Babe Ruth after nine innings of play, physicists won’t claim a discovery until they know that their little bump-on-a-graph is the real deal.

After the histogram “social experiment” at Berkeley, scientists developed a one-size-fits-all rule to separate the “Hall of Fame” discoveries from the “few good games” anomalies: the five-sigma threshold.

“Five sigma is a measure of probability,” says Kyle Cranmer, a physicist from New York University working on the ATLAS experiment. “It means that if a bump in the data is the result of random statistical fluctuation and not the consequence of some new property of nature, then we could expect to see a bump at least this big again only if we repeated our experiment a few million more times.”

To put it another way, five sigma means that if only statistical fluctuations were at work, there would be just a 0.00003 percent chance (about 1 in 3.5 million) of seeing a result at least this extreme. That makes it a good indication that something may be hiding under that bump.

But the five-sigma threshold is more of a guideline than a golden rule, and it does not tell physicists whether they have made a discovery, according to Bob Cousins, a physicist at the University of California, Los Angeles, working on the CMS experiment.

“A few years ago scientists posted a paper claiming that they had seen faster-than-light neutrinos,” Cousins says. But few people seemed to believe it, even though the result was six sigma. (A six-sigma result is a couple of hundred times stronger than a five-sigma result: a purely random fluctuation that large is a few hundred times less likely.)
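The comparison between six and five sigma can be checked as a ratio of tail probabilities: how much less likely pure noise is to reach six sigma than to reach five. This sketch assumes the same one-sided Gaussian convention as the five-sigma figure; the helper name is illustrative only.

```python
import math

def one_sided_p_value(z: float) -> float:
    # Upper-tail probability of the standard normal distribution, P(Z >= z)
    return 0.5 * math.erfc(z / math.sqrt(2))

p5 = one_sided_p_value(5.0)
p6 = one_sided_p_value(6.0)
ratio = p5 / p6  # how many times rarer a 6-sigma fluctuation is than a 5-sigma one

print(f"5 sigma: p = {p5:.2e}")
print(f"6 sigma: p = {p6:.2e}")
print(f"ratio:   {ratio:.0f}")
```

The ratio comes out near 290, in line with “a couple of hundred times.”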

The five-sigma rule is typically used as the standard for discovery in high-energy physics, but it does not incorporate another equally important scientific mantra: The more extraordinary the claim, the more evidence you need to convince the community.

“No one was arguing about the statistics behind the faster-than-light neutrinos observation,” Cranmer says. “But hardly anyone believed they got that result because the neutrinos were actually going faster than light.”

Within minutes of the announcement, physicists started dissecting every detail of the experiment to unearth an explanation. Anticlimactically, the culprit turned out to be a loose fiber-optic cable.

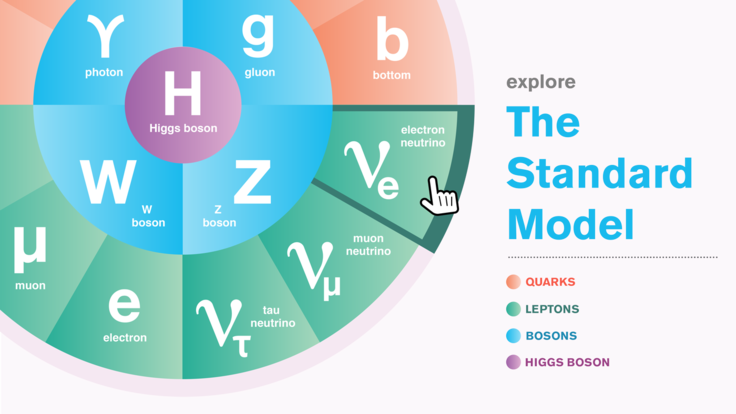

The “extraordinary claims, extraordinary evidence” philosophy also holds true for the inverse of the statement: If you see something you expected, then you don’t need as much evidence to claim a discovery. Physicists will sometimes relax their stringent statistical standards if they are verifying processes predicted by the Standard Model of particle physics, a thoroughly vetted description of the microscopic world.

“But if you don’t have a well-defined hypothesis that you are testing, you increase your chances of finding something that looks impressive just because you are looking everywhere,” Cousins says. “If you perform 800 broad searches across huge mass ranges for new particles, you’re likely to see at least one impressive three-sigma bump that isn’t anything at all.”
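Cousins’s 800-search example is an instance of what physicists call the look-elsewhere effect, and the arithmetic is short. The sketch below assumes every search is independent and reports a one-sided Gaussian significance with no real signal present. Real mass scans are correlated and more involved, so treat this as an illustration of the idea rather than how any experiment computes it; the seed and the number of simulated campaigns are arbitrary choices.

```python
import math
import random

def one_sided_p_value(z: float) -> float:
    # Chance that a single background-only search fluctuates upward by at least z sigma
    return 0.5 * math.erfc(z / math.sqrt(2))

n_searches = 800
p3 = one_sided_p_value(3.0)  # about 0.00135 for one search

# Analytic estimate, assuming the searches are independent
expected_bumps = n_searches * p3
p_at_least_one = 1.0 - (1.0 - p3) ** n_searches

# Monte Carlo check: rerun the whole 800-search campaign many times with pure noise
rng = random.Random(2024)  # arbitrary seed for reproducibility
n_campaigns = 1000
campaigns_with_bump = 0
for _ in range(n_campaigns):
    # Each search reports a standard-normal "significance" because no signal exists
    if any(rng.gauss(0.0, 1.0) >= 3.0 for _ in range(n_searches)):
        campaigns_with_bump += 1
simulated = campaigns_with_bump / n_campaigns

print(f"expected fake 3-sigma bumps: {expected_bumps:.2f}")
print(f"P(at least one), analytic:   {p_at_least_one:.2f}")
print(f"P(at least one), simulated:  {simulated:.2f}")
```

Both estimates land near two in three, with about one fake three-sigma bump expected across the 800 searches, which is why such a bump is “likely” even when nothing is there.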

In the end, there is no one-size-fits-all rule that separates discoveries from fluctuations. Two scientists could look at the same data, make the same histograms and still come to completely different conclusions.

So which results wind up in textbooks and which results are buried in the archive?

“This decision comes down to two personal questions: What was your prior belief, and what is the cost of making an error?” Cousins says. “With the Higgs discovery, we waited until we had overwhelming evidence of a Higgs-like particle before announcing the discovery, because if we made an error it could weaken people’s confidence in the LHC research program.”

Experimental physicists have another way of verifying their results before making a discovery claim: comparable studies from independent experiments.

“If one experiment sees something but another experiment with similar capabilities doesn’t, the first thing we would do is find out why,” Cranmer says. “People won’t fully believe a discovery claim without a solid cross check.”