For physicists trying to harness the power of electricity, no tool was more important than the vacuum tube. This lightbulb-like device controlled the flow of electricity and could amplify signals. In the early 20th century, vacuum tubes were used in radios, televisions and long-distance telephone networks.

But vacuum tubes had significant drawbacks: They generated heat; they were bulky; and they had a propensity to burn out. Physicists at Bell Labs, the research arm of AT&T, were interested in finding a replacement.

Applying their knowledge of quantum mechanics—specifically how electrons flowed between materials with electrical conductivity—they found a way to mimic the function of vacuum tubes without those shortcomings.

They had invented the transistor. At the time, the invention did not grace the front page of any major news publications. Even the scientists themselves couldn’t have appreciated just how important their device would be.

First came the transistor radio, popularized in large part by the new Japanese company Sony. Spreading portable access to radio broadcasts changed music and connected disparate corners of the world.

Transistors then paved the way for NASA’s Apollo program, which first took humans to the moon. And perhaps most importantly, transistors were made smaller and smaller, shrinking room-sized computers and magnifying their power to eventually create laptops and smartphones.

These quantum-inspired devices are central to every single modern electronic application that uses some computing power, such as cars, cellphones and digital cameras. You would not be reading this sentence without transistors, which are an important part of what is now called the first quantum revolution.

Quantum physicists Jonathan Dowling and Gerard Milburn coined the term “quantum revolution” in a 2002 paper. In it, they argue that we have now entered a new era, a second quantum revolution. “It just dawned on me that actually there was a whole new technological frontier opening up,” says Milburn, professor emeritus at the University of Queensland.

This second quantum revolution is defined by developments in technologies like quantum computing and quantum sensing, brought on by a deeper understanding of the quantum world and precision control down to the level of individual particles.

A quantum understanding

At the dawn of the 20th century, a new theory of matter and energy was emerging. Unsatisfied with classical explanations about the strange behavior of particles, physicists developed a new system of mechanics to describe what seemed to be a quantized, uncertain, probabilistic world.

One of the main questions quantum mechanics addressed was the nature of light. Eighteenth-century physicists believed light was a particle. Nineteenth-century physicists proved it had to be a wave. Twentieth-century physicists resolved the problem by redefining particles using the principles of quantum mechanics. They proposed that particles of light, now called photons, had some probability of existing in a given location—a probability that could be represented as a wave and even experience interference like one.

This newfound picture of the world helped make sense of results such as those of the double-slit experiment, which showed that particles like electrons and photons could behave as if they were waves.

But could a quantum worldview prove useful outside the lab?

At first, “quantum was usually seen as just a source of mystery and confusion and all sorts of strange paradoxes,” Milburn says.

But after World War II, people began figuring out how to use those paradoxes to get things done. Building on new quantum ideas about the behavior of electrons in metals and other materials, Bell Labs researchers William Shockley, John Bardeen and Walter Brattain created the first transistors. They realized that sandwiching semiconductors together could create a device that would allow electrical current to flow in one direction, but not another. Other technologies, such as atomic clocks and the nuclear magnetic resonance used for MRI scans, were also products of the first quantum revolution.

Another important and, well, visible quantum invention was the laser.

In the 1950s, optical physicists knew that hitting certain kinds of atoms with a few photons at the right energy could lead them to emit more photons with the same energy and direction as the initial photons. This effect would cause a cascade of photons, creating a stable, straight beam of light unlike anything seen in nature. Today, lasers are ubiquitous, used in applications from laser pointers to barcode scanners to life-saving medical techniques.

All of these devices were made possible by studies of the quantum world. Both the laser and transistor rely on an understanding of quantized atomic energy levels. Milburn and Dowling suggest that the technologies of the first quantum revolution are unified by “the idea that matter particles sometimes behaved like waves, and that light waves sometimes acted like particles.”

For the first time, scientists were using their understanding of quantum mechanics to create new tools that could be used in the classical world.

The second quantum revolution

Many of these developments were described to the public without resorting to the word “quantum,” as a Bell Labs video about the laser attests.

One reason for the disconnect was that the first quantum revolution didn’t make full use of quantum mechanics. “The systems were too noisy. In a sense, the full richness of quantum mechanics wasn’t really accessible,” says Ivan Deutsch, a quantum physicist at the University of New Mexico. “You can get by with a fairly classical picture.”

The stage for the second quantum revolution was set in the 1960s, when the Northern Irish physicist John Stewart Bell shook the foundations of quantum mechanics. Bell proposed that entangled particles were correlated in strange quantum ways and could not be explained with so-called “hidden variables.” Tests performed in the ’70s and ’80s confirmed that measuring one entangled particle really did seem to determine the state of the other, faster than any signal could travel between the two.
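To make the gap between “hidden variables” and quantum predictions concrete, here is a minimal sketch in Python. It uses the CHSH form of Bell's test (a later, commonly used variant, not Bell's original 1964 inequality). It assumes a pair of particles in the spin-singlet state, for which quantum mechanics predicts a correlation of -cos(a - b) between measurements along angles a and b. The function names and the choice of angles are illustrative assumptions.

```python
import itertools
import math


def singlet_correlation(a, b):
    """Quantum prediction for the correlation of a spin-singlet pair
    measured along angles a and b (in radians)."""
    return -math.cos(a - b)


def chsh_quantum(a, a2, b, b2):
    """CHSH combination of four correlations, using the quantum prediction."""
    return (singlet_correlation(a, b) - singlet_correlation(a, b2)
            + singlet_correlation(a2, b) + singlet_correlation(a2, b2))


def chsh_hidden_variable_bound():
    """Largest |S| when every outcome (+1 or -1) is fixed in advance,
    which is what a deterministic local hidden-variable model assumes."""
    best = 0
    for A, A2, B, B2 in itertools.product((-1, 1), repeat=4):
        s = A * B - A * B2 + A2 * B + A2 * B2
        best = max(best, abs(s))
    return best


# Illustrative measurement angles that maximize the quantum value.
s_quantum = abs(chsh_quantum(0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4))
s_classical = chsh_hidden_variable_bound()

print(f"hidden-variable bound: {s_classical}")
print(f"quantum prediction:    {s_quantum:.3f}")
```

Under these assumptions, the pre-assigned outcomes can never push the CHSH quantity above 2, while the quantum prediction reaches 2 times the square root of 2. Experiments that measure a value above 2 therefore cannot be explained by outcomes fixed in advance.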

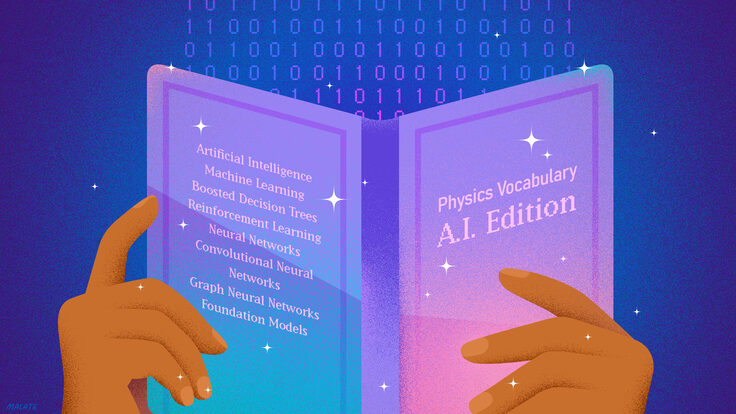

The other critical ingredient for the second quantum revolution was information theory, a blend of math and computer science developed by pioneers like Claude Shannon and Alan Turing. In 1994, combining new insight into the foundations of quantum mechanics with information theory led the mathematician Peter Shor to introduce a fast-factoring algorithm for a quantum computer, a computer whose bits exist in superposition and can be entangled.

Shor’s algorithm was designed to quickly divide large numbers into their prime factors. Using the algorithm, a quantum computer could solve the problem much more efficiently than a classical one. It was the clearest early demonstration of the worth of quantum computing.

“It really made the whole idea of quantum information, a new concept that those of us who had been working in related areas, instantly appreciated,” Deutsch says. “Shor’s algorithm suggested the possibilities new quantum tech could have over existing classical tech, galvanizing research across the board.”

Shor’s algorithm is of particular interest in encryption because the difficulty of identifying the prime factors of large numbers is precisely what keeps data private online. To unlock encrypted information, a computer must know the prime factors of a large number associated with it. Use a large enough number, and the puzzle of guessing its prime factors can take a classical computer thousands of years. With Shor’s algorithm, the guessing game can take just moments.
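The link between factoring and privacy can be sketched with a toy version of RSA, one widely used encryption scheme that depends on the difficulty of factoring. The example below is only an illustration: the primes, the public exponent and the message are tiny, hypothetical values chosen so that brute-force factoring finishes instantly, whereas real keys use primes hundreds of digits long. The brute-force search stands in for the attacker's factoring step and is not Shor's algorithm.

```python
def trial_division(n):
    """Find a factor pair of n by brute force.
    Feasible only because n is tiny in this toy example."""
    f = 2
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 1
    raise ValueError("n has no nontrivial factors")


# Key setup (toy values, assumed for illustration).
p, q = 61, 53
n = p * q   # public modulus: the "large number" attached to the data
e = 17      # public exponent, chosen coprime with (p - 1) * (q - 1)

# Anyone can encrypt with the public values (n, e).
message = 65
ciphertext = pow(message, e, n)

# An attacker who can factor n can rebuild the private key.
fp, fq = trial_division(n)
phi = (fp - 1) * (fq - 1)
d = pow(e, -1, phi)   # modular inverse; requires Python 3.8+
recovered = pow(ciphertext, d, n)

print("recovered message matches:", recovered == message)
```

The number of steps in the brute-force search grows with the square root of n, so each extra digit in the primes multiplies the work. That growth is what makes large keys safe against classical machines, and it is the step Shor's algorithm would shortcut.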

Today’s quantum computers are not yet advanced enough to run Shor’s algorithm on numbers anywhere near the size used in encryption. But as Deutsch points out, skeptics once doubted a quantum computer was even possible.

“Because there was a kind of trade-off,” he says. “The kind of exponential increase in computational power that might come from quantum superpositions would be counteracted exactly, by exponential sensitivity to noise.”

While inventions like the transistor required knowledge of quantum mechanics, the device itself wasn’t in a delicate quantum state, so it could be described semi-classically. Quantum computers, on the other hand, require delicate quantum connections.

What changed was Shor’s introduction of quantum error-correcting codes. By combining concepts from classical information theory with quantum mechanics, Shor showed that, in theory, even the delicate state of a quantum computer could be preserved.

Beyond quantum computing, the second quantum revolution also relies on and encompasses new ways of using technology to manipulate matter at the quantum level.

Using lasers, researchers have learned to sap the energy of atoms and cool them. Like a soccer player slowing a ball with a series of light taps, laser light nudges atoms again and again until they are nearly still. Together with further cooling steps, the technique can bring atoms to billionths of a degree above absolute zero, far colder than conventional cooling techniques can reach. In 1995, scientists used laser cooling followed by evaporative cooling to observe a long-predicted state of matter: the Bose-Einstein condensate.

Other quantum optical techniques have been developed to make ultra-precise measurements.

Classical interferometers, like the type used in the famous Michelson-Morley experiment that compared the speed of light in different directions to search for signs of a hypothetical aether, looked at the interference pattern of light. New matter-wave interferometers exploit the principle that everything, not just light, has a wavefunction. Measuring changes in the phase of atoms, which have far shorter wavelengths than light, could give unprecedented precision to experiments that attempt to measure the smallest effects, like those of gravity.
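The claim about wavelengths can be checked with the de Broglie relation, wavelength = h / (mass × speed). The sketch below compares an atom with a beam of light. The choice of a rubidium-87 atom, its speed of 1 meter per second and the 780-nanometer light wavelength are assumptions picked for illustration and do not describe any particular experiment.

```python
PLANCK_CONSTANT = 6.62607015e-34      # joule-seconds (exact SI value)
ATOMIC_MASS_UNIT = 1.66053906660e-27  # kilograms


def de_broglie_wavelength(mass_kg, speed_m_per_s):
    """Matter wavelength in meters for a particle of given mass and speed."""
    return PLANCK_CONSTANT / (mass_kg * speed_m_per_s)


# Assumed example values (hypothetical, for illustration only).
rubidium_87_mass = 86.909 * ATOMIC_MASS_UNIT  # kilograms
atom_speed = 1.0                              # meters per second
light_wavelength = 780e-9                     # meters, near-infrared light

atom_wavelength = de_broglie_wavelength(rubidium_87_mass, atom_speed)
ratio = light_wavelength / atom_wavelength

print(f"atom wavelength:  {atom_wavelength:.2e} m")
print(f"light wavelength: {light_wavelength:.2e} m")
print(f"light / atom:     {ratio:.0f}")
```

With these assumed numbers the atom's wavelength comes out at a few nanometers, more than a hundred times shorter than the light's. A shorter wavelength means a given disturbance shifts the interference pattern by a larger fraction of a cycle, which is where the extra sensitivity comes from.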

With laboratories and companies around the world focused on advancements in quantum science and applications, the second quantum revolution has only begun. As Bardeen put it in his Nobel lecture, we may be at another “particularly opportune time ... to add another small step in the control of nature for the benefit of [hu]mankind.”