When do a few scattered dots become a line? And when does that line become a particle track? For decades, physicists have been asking these kinds of questions. Today, so are their machines.

Machine learning is the process by which the task of pattern recognition is outsourced to a computer algorithm. Humans are naturally very good at finding and processing patterns. That’s why you can instantly recognize a song from your favorite band, even if you’ve never heard it before.

Machine learning takes this very human process and puts computing power behind it. Whereas a human might be able to recognize a band based on a variety of attributes such as the vocal tenor of the lead singer, a computer can process other subtle features a human might miss. The music-streaming platform Pandora categorizes every piece of music in terms of 450 different auditory qualities.

“Machines can handle a lot more information than our brains can,” says Eduardo Rodrigues, a physicist at the University of Cincinnati. “It’s why they can find patterns that are sometimes invisible to us.”

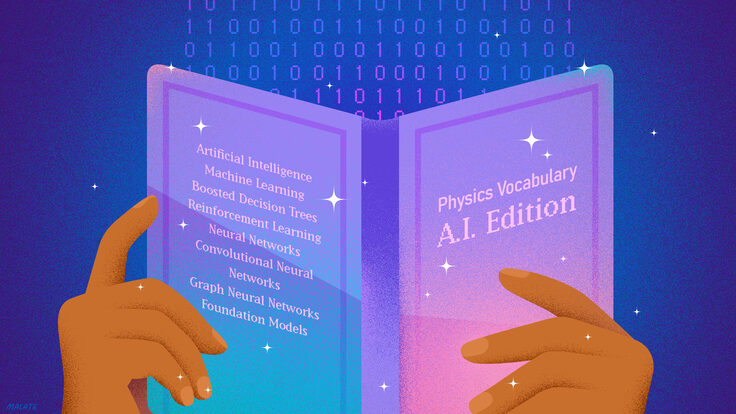

Machine learning started to become commonplace in computing during the 1980s, and LHC physicists have been using it routinely to help to manage and process raw data since 2012. Now, with upgrades to what is already the world’s most powerful particle accelerator looming on the horizon, physicists are implementing new applications of machine learning to help them with the imminent data deluge.

“The high-luminosity upgrade to the LHC is going to increase our amount of data by a factor of 100 relative to that used to discover the Higgs,” says Peter Elmer, a physicist at Princeton University. “This will help us search for rare particles and new physics, but if we’re not prepared, we risk being completely swamped with data.”

Only a small fraction of the LHC’s collisions are interesting to scientists. For instance, Higgs bosons are born in roughly one out of every 2 billion proton-proton collisions. Machine learning is helping scientists to sort through the noise and isolate what’s truly important.

“It’s like mining for rare gems,” Rodrigues says. “Keeping all the sand and pebbles would be ridiculous, so we use algorithms to help us single out the things that look interesting. With machine learning, we can purify the sample even further and more efficiently.”

LHC physicists use a kind of machine learning called supervised learning. The principle behind supervised learning is nothing new; in fact, it’s how most of us learn how to read and write. Physicists start by training their machine-learning algorithms with data from collisions that are already well-understood. They tell them, “This is what a Higgs looks like. This is what a particle with a bottom quark looks like.”

After giving an algorithm all of the information they already know about hundreds of examples, physicists then pull back and task the computer with identifying the particles in collisions without labels. They monitor how well the algorithm performs and give corrections along the way. Eventually, the computer needs only minimal guidance and can become even better than humans at analyzing the data.
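The train-then-test loop described above can be sketched in a few lines of Python. This is only a toy illustration, not the software LHC experiments actually run: the two numeric "features" per event, the `signal` and `background` labels, and the nearest-centroid rule are all assumptions chosen to keep the example small.

```python
import random

def make_events(n, label, rng):
    """Generate toy events: two made-up features scattered around a label-specific centre."""
    centre = (2.0, 2.0) if label == "signal" else (0.0, 0.0)
    return [((rng.gauss(centre[0], 1.0), rng.gauss(centre[1], 1.0)), label)
            for _ in range(n)]

def train(examples):
    """Learn one average point (centroid) per label from the labelled examples."""
    sums, counts = {}, {}
    for (x, y), label in examples:
        sx, sy = sums.get(label, (0.0, 0.0))
        sums[label] = (sx + x, sy + y)
        counts[label] = counts.get(label, 0) + 1
    return {label: (sx / counts[label], sy / counts[label])
            for label, (sx, sy) in sums.items()}

def predict(centroids, features):
    """Assign the label whose centroid is closest to the event's features."""
    x, y = features
    return min(centroids,
               key=lambda label: (x - centroids[label][0]) ** 2
                               + (y - centroids[label][1]) ** 2)

def accuracy(centroids, examples):
    """Fraction of events whose predicted label matches the true one."""
    correct = sum(predict(centroids, f) == label for f, label in examples)
    return correct / len(examples)

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Step 1: train on well-understood events that come with labels.
training = make_events(200, "signal", rng) + make_events(200, "background", rng)
model = train(training)

# Step 2: hide the labels on fresh events and check how well the model does.
held_out = make_events(200, "signal", rng) + make_events(200, "background", rng)
score = accuracy(model, held_out)
print(f"fraction of held-out events labelled correctly: {score:.2f}")
```

The score on the held-out events plays the role of the monitoring step: if it is too low, the physicist adjusts the features or the training sample and tries again.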

“This is saving the LHCb experiment a huge amount of time,” Rodrigues says. “In the past, we needed months to make sense of our raw detector data. With machine learning, we can now process and label events within the first few hours after we record them.”

Not only is machine learning helping physicists understand their real data, but it will soon help them create simulations to test their predictions from theory as well.

Using conventional algorithms, without machine learning, scientists have created virtual versions of their detectors with all the known laws of physics pre-programmed.

“The virtual experiment follows the known laws of physics to a T,” Elmer says. “We simulate proton-proton collisions and then predict how the byproducts will interact with every part of our detector.”

If scientists find a consistent discrepancy between the virtual data generated by their simulations and the real data recorded by their detectors, it could mean that the particles in the real world are playing by a different set of rules than the ones physicists already know.

A weakness of scientists’ current simulations is that they’re too slow. They use a series of algorithms to precisely calculate how a particle will interact with every detector part it bumps into while moving through the many layers of a particle detector.

Even though it takes only a few minutes to simulate a collision this way, scientists need to simulate trillions of collisions to cover the possible outcomes of the 600 million collisions per second they will record with the HL-LHC.
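A back-of-the-envelope calculation shows the scale of the problem. The figures below are assumptions made for illustration, not numbers from the experiments: "a few minutes" is taken as 2 minutes on a single processor, and "trillions" as exactly 1 trillion collisions.

```python
MINUTES_PER_COLLISION = 2        # assumption: "a few minutes" taken as 2
COLLISIONS_TO_SIMULATE = 1e12    # assumption: "trillions" taken as 1 trillion
MINUTES_PER_YEAR = 60 * 24 * 365

# Total computing time if every collision is simulated in full detail.
cpu_minutes = MINUTES_PER_COLLISION * COLLISIONS_TO_SIMULATE
cpu_years = cpu_minutes / MINUTES_PER_YEAR

print(f"about {cpu_years:,.0f} years of single-processor computing time")
```

Under these assumptions the total comes to several million processor-years, which is why even very large computing grids struggle to keep up.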

“We don’t have the time or resources for that,” Elmer says.

With machine learning, on the other hand, they can generalize. Instead of calculating every single particle interaction with matter along the way, they can estimate its overall behavior based on its typical paths through the detector.
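The trade-off can be sketched with a toy model. Here the "detailed" simulation adds up a small random energy loss in each of 50 hypothetical detector layers, while the "fast" version draws the total loss in a single step from a distribution fitted to a batch of detailed runs. The layer count, the energy-loss values and the simple Gaussian fit are all assumptions; the Gaussian merely stands in for whatever trained model a real experiment would use.

```python
import random
import statistics

N_LAYERS = 50               # hypothetical number of detector layers
MEAN_LOSS_PER_LAYER = 0.02  # hypothetical average energy loss per layer

def detailed_energy_loss(rng):
    """Step through every layer, sampling a small random loss in each one."""
    return sum(rng.expovariate(1.0 / MEAN_LOSS_PER_LAYER) for _ in range(N_LAYERS))

def fit_fast_model(samples):
    """Summarise many detailed runs by their mean and spread."""
    return statistics.mean(samples), statistics.stdev(samples)

def fast_energy_loss(rng, model):
    """Estimate the total loss in one draw instead of N_LAYERS separate steps."""
    mean, spread = model
    return max(0.0, rng.gauss(mean, spread))

rng = random.Random(1)  # fixed seed so the sketch is repeatable

# Run the slow, detailed simulation once to learn the typical behaviour.
reference = [detailed_energy_loss(rng) for _ in range(2000)]
model = fit_fast_model(reference)

# Afterwards, each new particle costs a single random draw.
fast = [fast_energy_loss(rng, model) for _ in range(2000)]

print(f"detailed mean loss: {statistics.mean(reference):.3f}")
print(f"fast mean loss:     {statistics.mean(fast):.3f}")
```

The fast version reproduces the overall behavior but discards the layer-by-layer detail, which is the quality-for-quantity exchange the approach relies on.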

“It’s a matter of balancing quality with quantity,” Elmer says. “We’ll still use the very precise calculations for some studies. But for others, we don’t need such high-resolution simulations for the physics we want to do.”

Machine learning is helping scientists process more data faster. With the planned upgrades to the LHC, it could play an even larger role in the future. But it is not a silver bullet, Elmer says.

“We still want to understand why and how all of our analyses work so that we can be completely confident in the results they produce,” he says. “We’ll always need a balance between shiny new technologies and our more traditional analysis techniques.”