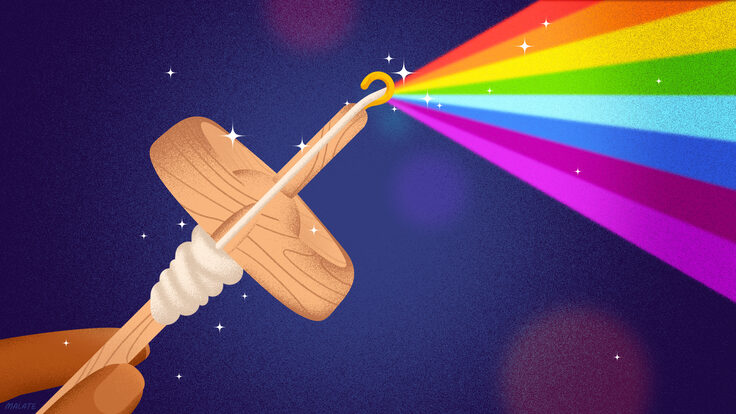

A still from a simulated animation of a Type Ia supernova. Image and animation courtesy of flash.uchicago.edu.

What is matter? Where did it come from? What is the future of the universe? To answer these compelling questions, astrophysicists are trying to learn more about the physics of the big bang and the origin of structure: the formation of the initial clumps of matter from the primordial soup. Computational tools and resources are indispensable to pursuing these fundamental questions.

Robert Rosner, director of Argonne National Laboratory and professor at the University of Chicago, spoke Friday, February 13 at the AAAS conference in Chicago about the role of simulation in studying the origins and evolution of the universe.

Direct observation of the cosmos has uncovered a host of facts. For example, the universe is expanding from the big bang and its expansion is accelerating. But observation will only take us so far, said Rosner. Scientists need to use theory to construct possible ‘scenarios’, and test them via experiments at particle accelerator laboratories and via computer simulations. Rosner presented simulated animations from a couple of important projects as examples of the use of simulation in astrophysics.

The Millennium Simulation Project is helping to clarify the physical processes underlying the buildup of real galaxies and black holes. It has traced the evolution of the matter distribution in a cubic region of the universe more than 2 billion light-years on a side. According to its Web site, this simulation kept the principal supercomputer at the Max Planck Society's Supercomputing Center in Garching, Germany, busy for more than a month.

The ASC/Alliances Center for Astrophysical Thermonuclear Flashes at the University of Chicago runs simulations to solve the problem of thermonuclear explosions on the surfaces of compact stars. Their simulations of Type Ia supernovae, exploding white dwarf stars, have shown that an internal flame ‘bubble’ emerges at a point on the stellar surface, leading to surface waves that converge at the opposite point, and causing a shock and subsequent detonation of the entire star. Previously, scientists thought that the original flame would directly transition to a detonation. Based only on well-known physical processes, these simulations exemplify the potential of numerical simulations for scientific discovery.

Rosner noted that computing is moving towards the exascale (processing power of over 10^18 FLOPS). He compared the current transition in computational methods and capabilities to the early 1990s when scientists moved from vector machines (such as the Cray) to massively parallel computers. At that time, the challenge was to modify existing codes optimized for vector machines to run efficiently on massively parallel machines; the new challenge is that of resource diversity across the network: how to construct algorithms that are flexible enough to efficiently exploit a variety of resource types, such as multi-core and heterogeneous processor computer architectures.

“For instance, we’re learning that for heterogeneous systems the MPI programming model, a standard for message passing between distinct tasks running concurrently on a computer, may not work well,” Rosner said. “Do we go back to multithreading and OpenMP from the early 90s? Are new algorithms needed? We may well have to change the way we program once again; and given our huge investments in existing codes, this will be a huge challenge.”

“The future is stunningly exciting,” said Rosner. “When we get to exascale computing we can capture the visible universe and we will understand how the observed structure came to be. We’ll be able to reach more reliable conclusions about the fate of our universe.”

This story first appeared in International Science Grid This Week.